Contributed by: Arun K Sharma

Imagine a large law firm takes over a smaller law firm and wants to identify the documents corresponding to different types of cases, such as civil or criminal cases, that the smaller firm has dealt with or is currently dealing with. The presumption is that the smaller firm has not already classified the documents. An intuitive way of identifying the documents in such situations is to look for specific sets of keywords and, based on the sets of keywords found, identify the type of each document. In Natural Language Processing (NLP), this task is referred to as topic modelling. Here, the term ‘topic’ refers to a set of words that come to mind when we think of a subject. For instance, when we think of the topic ‘entertainment’, the words that come to mind are ‘movies’, ‘sitcoms’, ‘web series’, ‘Netflix’, ‘YouTube’ and so on. In our example of legal documents for the law firm, a set of words such as ‘property’, ‘litigation’ and ‘tort’ helps identify that the document is related to a ‘real-estate’ (topic) case. A model trained to automatically discover topics appearing in documents is referred to as a topic model.

At this point, it is important to note that topic modelling is not the same as topic classification. Topic classification is a supervised learning approach in which a model is trained using manually annotated data with predefined topics. After training, the model classifies unseen texts according to those predefined topics. Topic modelling, on the other hand, is an unsupervised learning approach in which the model identifies the topics by detecting patterns such as word clusters and word frequencies. The outputs of a topic model are:

1) clusters of documents that the model has grouped based on topics and

2) clusters of words (topics) that the model has used to infer the relations.

The above discussion hints at a couple of underlying assumptions in topic modelling: 1) the distributional assumption and 2) the statistical mixture assumption. The distributional assumption indicates that similar topics make use of similar words, and the statistical mixture assumption indicates that each document deals with several topics. Simply put, for a given corpus of documents, each document can be represented as a statistical distribution over a fixed set of topics. The role of the topic model is to identify the topics and represent each document as a distribution over these topics.

Some of the well-known topic modelling techniques are Latent Semantic Analysis (LSA), Probabilistic Latent Semantic Analysis (PLSA), Latent Dirichlet Allocation (LDA), and Correlated Topic Model (CTM). In this article, we will focus on LDA, a popular topic modelling technique.

Latent Dirichlet Allocation (LDA)

Before getting into the details of the Latent Dirichlet Allocation model, let’s look at the words that form the name of the technique. The word ‘Latent’ indicates that the model discovers the ‘yet-to-be-found’ or hidden topics from the documents. ‘Dirichlet’ indicates LDA’s assumption that the distribution of topics in a document and the distribution of words in topics are both Dirichlet distributions. ‘Allocation’ indicates the distribution of topics in the document.

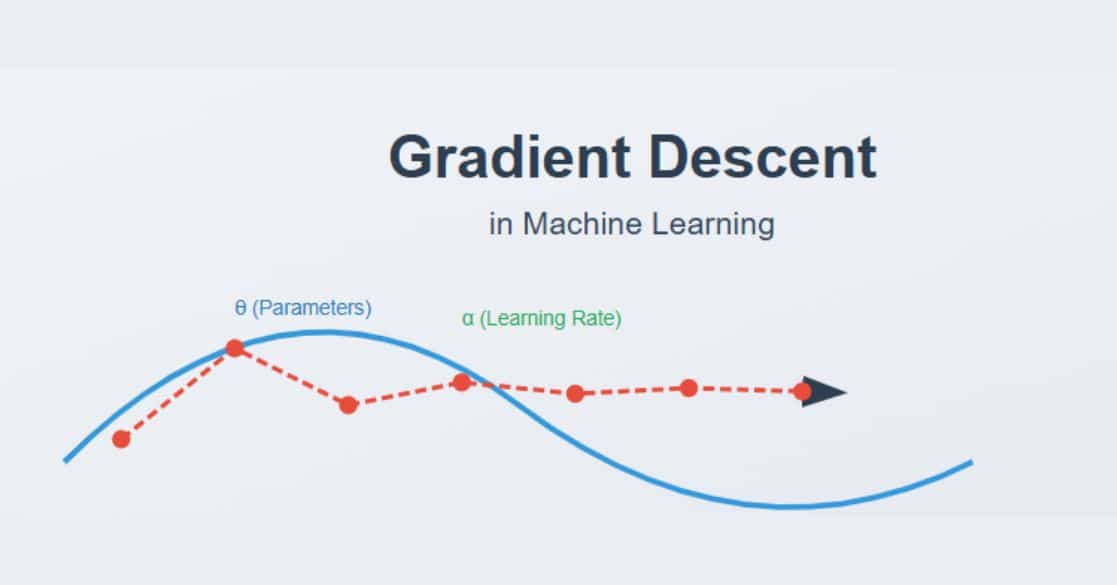

LDA assumes that documents are composed of words that help determine the topics and maps documents to a list of topics by assigning each word in the document to different topics. The assignment is in terms of conditional probability estimates as shown in figure 2. In the figure, the value in each cell indicates the probability of a word wj belonging to topic tk. ‘j’ and ‘k’ are the word and topic indices respectively. It is important to note that LDA ignores the order of occurrence of words and the syntactic information. It treats documents just as a collection of words or a bag of words.

Once the probabilities are estimated (we will get to how these are estimated shortly), the collection of words that represents a given topic can be found either by picking the ‘r’ words with the highest probabilities or by setting a probability threshold and picking only the words whose probabilities are greater than or equal to the threshold value. For instance, if we focus on topic-1 in figure 2 and pick the top 4 probabilities, assuming that the probabilities of the words not shown in the table are less than 0.012, then topic-1 can be represented as shown below using the top ‘r’ probabilities approach.

In the above example, if word-k, word1, word3 and word2 are respectively trees, mountains, rivers and streams then topic-1 could correspond to ‘nature’.
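The two selection rules described above can be sketched in a few lines of Python. This is only an illustration: the probability values are made up (they are not the values from figure 2), and the helper names `top_r_words` and `words_above_threshold` are hypothetical.

```python
# Hypothetical word probabilities for one topic. The numbers are invented
# for illustration and are NOT taken from the article's figure.
topic_word_probs = {
    "trees": 0.30,
    "mountains": 0.25,
    "rivers": 0.20,
    "streams": 0.10,
    "invoice": 0.010,
    "contract": 0.005,
}


def top_r_words(word_probs, r):
    """Return the r words with the highest probability, best first."""
    ranked = sorted(word_probs.items(), key=lambda item: item[1], reverse=True)
    return [word for word, _ in ranked[:r]]


def words_above_threshold(word_probs, threshold):
    """Return the words whose probability is at least `threshold`, best first."""
    ranked = sorted(word_probs.items(), key=lambda item: item[1], reverse=True)
    return [word for word, prob in ranked if prob >= threshold]


print(top_r_words(topic_word_probs, 4))
print(words_above_threshold(topic_word_probs, 0.012))
```

With these made-up numbers both rules pick the same four words, because every other word falls below the 0.012 threshold.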

One of the important inputs to LDA is the number of topics expected in the documents. In the above example, if we set the number of expected topics to 3, each document can be represented as shown below.

In the above representation, the three weights correspond to topic-1, topic-2 and topic-3 respectively for a given document. The first weight indicates the proportion of words in the document that represent topic-1, the second weight indicates the proportion of words in the document that represent topic-2, and so on.
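To make the idea of per-document topic weights concrete, here is a minimal sketch that turns word-level topic assignments into proportions. The assignments list and the function name `topic_proportions` are assumptions made for illustration only.

```python
from collections import Counter


def topic_proportions(word_topic_assignments, num_topics):
    """Share of a document's words assigned to each topic (index 0..num_topics-1)."""
    counts = Counter(word_topic_assignments)
    total = len(word_topic_assignments)
    return [counts[k] / total for k in range(num_topics)]


# Hypothetical document of ten words; each entry is the topic index
# (0 = topic-1, 1 = topic-2, 2 = topic-3) assigned to one word.
assignments = [0, 0, 1, 2, 0, 1, 0, 2, 0, 1]

weights = topic_proportions(assignments, num_topics=3)
print(weights)  # [0.5, 0.3, 0.2]
```

The weights always sum to one, which is why they can be read as a distribution over topics for that document.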

LDA Algorithm

LDA assumes that each document is generated by a statistical generative process. That is, each document is a mix of topics, and each topic is a mix of words. For example, figure 3 shows a document with ten different words. This document could be assumed to be a mix of three topics: tourism, facilities and feedback. Each of these topics, in turn, is a mix of different collections of words. In the process of generating this document, a topic is first selected from the document-topic distribution, and then a word is selected from the multinomial topic-word distribution of the selected topic.
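The generative story above can be sketched with NumPy. This is a toy illustration under stated assumptions: the vocabulary, the topic-word probabilities and the value of `alpha` are all invented, and only the three topic names come from the example above.

```python
import numpy as np

rng = np.random.default_rng(seed=0)

topics = ["tourism", "facilities", "feedback"]
# Hypothetical vocabulary, chosen only for illustration.
vocab = ["beach", "museum", "tour", "pool", "wifi", "parking", "great", "poor", "clean"]

# Hypothetical topic-word distributions: one row per topic, each row sums to 1.
topic_word = np.array([
    [0.4, 0.3, 0.3, 0.0, 0.0, 0.0, 0.0, 0.0, 0.0],  # tourism
    [0.0, 0.0, 0.0, 0.4, 0.3, 0.3, 0.0, 0.0, 0.0],  # facilities
    [0.0, 0.0, 0.0, 0.0, 0.0, 0.0, 0.4, 0.3, 0.3],  # feedback
])

# Draw this document's topic mix from a Dirichlet distribution (assumed alpha).
alpha = np.array([0.5, 0.5, 0.5])
doc_topic = rng.dirichlet(alpha)


def generate_document(num_words):
    """For each word slot: pick a topic, then pick a word from that topic."""
    words = []
    for _ in range(num_words):
        k = rng.choice(len(topics), p=doc_topic)      # topic for this slot
        w = rng.choice(len(vocab), p=topic_word[k])   # word from that topic
        words.append(vocab[w])
    return words


document = generate_document(10)
print(dict(zip(topics, doc_topic.round(2))))
print(document)
```

Note that the word order in the generated document carries no meaning, which matches the bag-of-words view that LDA takes.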

While identifying the topics in the documents, LDA does the opposite of the generation process. The general steps involved in the process are shown in figure 4. It's important to note that LDA begins with random assignment of topics to each word and iteratively improves the assignment of topics to words through Gibbs sampling.

Let’s understand the process by taking a simple example. Let’s consider a corpus of ‘m’ documents with a five-word vocabulary. The corpus and the steps in the resampling process are shown in figure 5.
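For readers who prefer code to diagrams, below is a minimal sketch of a collapsed Gibbs sampler for LDA, written in the commonly used textbook form. It is an assumption that this matches the steps in figure 5 exactly; the function name `gibbs_lda`, the toy corpus and the default values of `alpha`, `beta` and `iterations` are all chosen for illustration.

```python
import numpy as np


def gibbs_lda(docs, vocab_size, num_topics, alpha=0.1, beta=0.1, iterations=50, seed=0):
    """Toy collapsed Gibbs sampler. `docs` is a list of lists of word indices."""
    rng = np.random.default_rng(seed)
    doc_topic = np.zeros((len(docs), num_topics))    # words in doc d assigned to topic k
    topic_word = np.zeros((num_topics, vocab_size))  # times word w is assigned to topic k
    topic_total = np.zeros(num_topics)               # all words assigned to topic k

    # Start from a random topic assignment for every word occurrence.
    assignments = []
    for d, doc in enumerate(docs):
        z_d = rng.integers(num_topics, size=len(doc))
        assignments.append(z_d)
        for w, k in zip(doc, z_d):
            doc_topic[d, k] += 1
            topic_word[k, w] += 1
            topic_total[k] += 1

    for _ in range(iterations):
        for d, doc in enumerate(docs):
            for i, w in enumerate(doc):
                # Remove the current assignment of this word from the counts.
                k = assignments[d][i]
                doc_topic[d, k] -= 1
                topic_word[k, w] -= 1
                topic_total[k] -= 1
                # Score each topic: (topic's share in the document) times
                # (word's share in the topic), smoothed by alpha and beta.
                p = (doc_topic[d] + alpha) * (topic_word[:, w] + beta) \
                    / (topic_total + vocab_size * beta)
                k = rng.choice(num_topics, p=p / p.sum())
                # Record the resampled assignment.
                assignments[d][i] = k
                doc_topic[d, k] += 1
                topic_word[k, w] += 1
                topic_total[k] += 1

    # Turn the smoothed counts into probability distributions.
    theta = (doc_topic + alpha) / (doc_topic + alpha).sum(axis=1, keepdims=True)
    phi = (topic_word + beta) / (topic_word + beta).sum(axis=1, keepdims=True)
    return theta, phi


# Hypothetical corpus: three documents over a five-word vocabulary (indices 0..4).
docs = [[0, 1, 0, 1, 2], [3, 4, 3, 4, 4], [0, 1, 3, 4, 2]]
theta, phi = gibbs_lda(docs, vocab_size=5, num_topics=2)
print(theta.round(2))  # document-topic distribution, one row per document
print(phi.round(2))    # topic-word distribution, one row per topic
```

The sketch favours readability over speed; library implementations use more efficient inference and handle much larger corpora.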

Hyperparameters in LDA

LDA has three hyperparameters:

1) document-topic density factor ‘α’, shown in step-7 of figure 5,

2) topic-word density factor ‘β’, shown in step-8 of figure 5 and

3) the number of topics ‘K’ to be considered.

The ‘α’ hyperparameter controls the number of topics expected in each document. A low value of ‘α’ implies that fewer topics are expected in a document’s mix, and a higher value implies that documents are expected to contain a larger number of topics.

The ‘β’ hyperparameter controls the distribution of words per topic. At lower values of ‘β’, the topics will likely have fewer words, and at higher values the topics will likely have more words.

Ideally, we would like to see a few topics in each document and few words in each of the topics. So, α and β are typically set below one.
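The effect of a small versus a large density factor can be seen by sampling from a symmetric Dirichlet distribution. The snippet below is a rough illustration using NumPy; the number of topics, the two `alpha` values and the helper name `average_largest_weight` are assumptions made for this sketch.

```python
import numpy as np

rng = np.random.default_rng(seed=0)
num_topics = 10


def average_largest_weight(alpha, samples=2000):
    """Average weight of the dominant topic across sampled topic mixes."""
    draws = rng.dirichlet([alpha] * num_topics, size=samples)
    return draws.max(axis=1).mean()


sparse = average_largest_weight(0.1)   # alpha below one: a few topics dominate
dense = average_largest_weight(10.0)   # alpha above one: weights spread evenly

print(f"alpha=0.1  -> dominant topic weight about {sparse:.2f}")
print(f"alpha=10.0 -> dominant topic weight about {dense:.2f}")
```

With a value below one, most of the weight in each sampled mix lands on one or two topics. With a large value, the weight is spread almost evenly across all ten. The same reasoning applies to ‘β’ and the words within a topic.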

The ‘K’ hyperparameter specifies the number of topics expected in the corpus of documents. Choosing a value for K is generally based on domain knowledge. An alternative is to train several LDA models with different values of K, compute the ‘Coherence Score’ (to be discussed shortly) for each, and choose the value of K for which the coherence score is highest.

Coherence score / Topic Coherence score

Topic coherence score is a measure of how good a topic model is at generating coherent topics.

A coherent topic should be semantically interpretable and not an artifact of statistical inference. A higher coherence score indicates a better topic model. The reference ‘Automatic Evaluation of Topic Coherence’ discusses this topic in greater depth.
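There are several ways to compute a coherence score. As a rough illustration, the sketch below implements one simple co-occurrence-based variant (often called a UMass-style score), averaged over word pairs. This choice is an assumption made for the example and is not necessarily the measure used in the reference above. The toy documents and the function name `umass_coherence` are also hypothetical.

```python
import math
from itertools import combinations


def umass_coherence(topic_words, documents):
    """Average log co-occurrence ratio over all pairs of topic words.

    Assumes every topic word occurs in at least one document.
    Scores closer to zero mean the words tend to appear together.
    """
    doc_sets = [set(doc) for doc in documents]

    def doc_freq(*words):
        # Number of documents that contain all of the given words.
        return sum(1 for doc in doc_sets if all(w in doc for w in words))

    scores = [
        math.log((doc_freq(first, second) + 1) / doc_freq(first))
        for first, second in combinations(topic_words, 2)
    ]
    return sum(scores) / len(scores)


# Hypothetical, already preprocessed documents.
documents = [
    ["river", "mountain", "tree"],
    ["river", "tree", "stream"],
    ["court", "judge", "law"],
    ["judge", "law", "appeal"],
    ["mountain", "tree", "stream"],
]

coherent_topic = ["tree", "river", "mountain"]
mixed_topic = ["tree", "judge", "appeal"]

print(umass_coherence(coherent_topic, documents))
print(umass_coherence(mixed_topic, documents))
```

The topic whose words actually appear together in documents receives the higher score, which is the behaviour one would want from a coherence measure.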

Data preprocessing for LDA

The typical preprocessing steps before performing LDA are:

1) tokenization,

2) punctuation and special character removal,

3) stop word removal and

4) lemmatization.

Note that additional preprocessing may be required based on the quality of the data.
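The four steps listed above can be sketched in plain Python. The stop word set and the lemma lookup table below are tiny, hypothetical stand-ins. In practice one would typically rely on an NLP library for stop word lists and lemmatization.

```python
import re

# Hypothetical, deliberately tiny resources for illustration only.
STOP_WORDS = {"the", "a", "an", "is", "are", "was", "were", "of", "in", "on", "and", "to"}
LEMMAS = {"cases": "case", "filed": "file", "courts": "court", "documents": "document"}


def preprocess(text):
    """Tokenize, drop punctuation and stop words, then map words to lemmas."""
    # Steps 1 and 2: lowercase and keep only runs of letters. This also
    # discards digits, punctuation and special characters.
    tokens = re.findall(r"[a-z]+", text.lower())
    # Step 3: remove stop words.
    tokens = [token for token in tokens if token not in STOP_WORDS]
    # Step 4: lemmatize with a lookup table (unknown words are kept as-is).
    return [LEMMAS.get(token, token) for token in tokens]


print(preprocess("The documents were filed in two courts!"))
# ['document', 'file', 'two', 'court']
```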

LDA in Python

There are a few Python packages that can be used for LDA-based topic modelling. The popular packages are Gensim and Scikit-learn. Of the two, Gensim is the top contender. ‘Topic Modeling with Gensim’ is a good reference for learning to use the Gensim package to perform LDA. ‘Introduction to topic modeling’ serves as a good reference for using the Scikit-learn package to perform LDA.

Some real-world novel applications of LDA

LDA has conventionally been used to find thematic word clusters, or topics, in text data. Besides this, LDA has also been used as a component in more sophisticated applications. Some of these applications are listed below.

- Cascaded LDA for taxonomy building [6]: an online content generation system for organizing and managing a large volume of content. Details of this application are provided in Smart Online Content Generation System.

- LDA based recommendation system [7]: a recommendation engine for books based on their Wikipedia articles. Details of this application are provided in the LDA based recommendation engine.

- Classification of gene expression [8]: understanding the role of differential gene expression in cancer etiology and cellular processes. Details of this application are provided in A Novel Approach for Classifying Gene Expression Data using.

This brings us to the end of the blog on Latent Dirichlet Allocation. We hope you found this helpful.