Before we move on to the more complicated concepts, let us quickly define Text Summarization. Here is a commonly cited definition:

"Automatic text summarization is the task of producing a concise and fluent summary while preserving key information content and overall meaning" - Text Summarization Techniques: A Brief Survey

Need for Text Summarization Python

Organisations of every kind, whether online retailers, private companies, government bodies, tourism and catering businesses, or any other institution that offers customer services, want to learn from their customers' feedback each time their services are used. These organisations receive an enormous amount of feedback and data every day, and analyzing all of these data points to develop insights is a tedious task for management.

However, technology can now take over many tasks that we previously had to perform ourselves. Machine Learning is one field that makes this possible. With the help of Natural Language Processing (NLP), machines have become capable of understanding human language, and text analytics now supports a great deal of research.

One application of text analytics and NLP is Text Summarization. Text Summarization in Python helps shorten the text in user feedback. It relies on an algorithm that reduces bodies of text while keeping their original meaning intact or giving insight into the original text.

Two different approaches are used for Text Summarization:

- Extractive Summarization

- Abstractive Summarization

Extractive Summarization

In Extractive Summarization, we identify the essential phrases or sentences in the original text and extract only those. The extracted sentences form the summary.

Abstractive Summarization

In the Abstractive Summarization approach, we generate new sentences from the original text. This contrasts with the extractive approach described above: the sentences it generates might not be present in the original text at all.

We are going to focus on the extractive method. It works by identifying meaningful sentences or excerpts in the text and reproducing them as the summary. No new text is generated; only the existing text is used in the summarization process.

Steps for Implementation

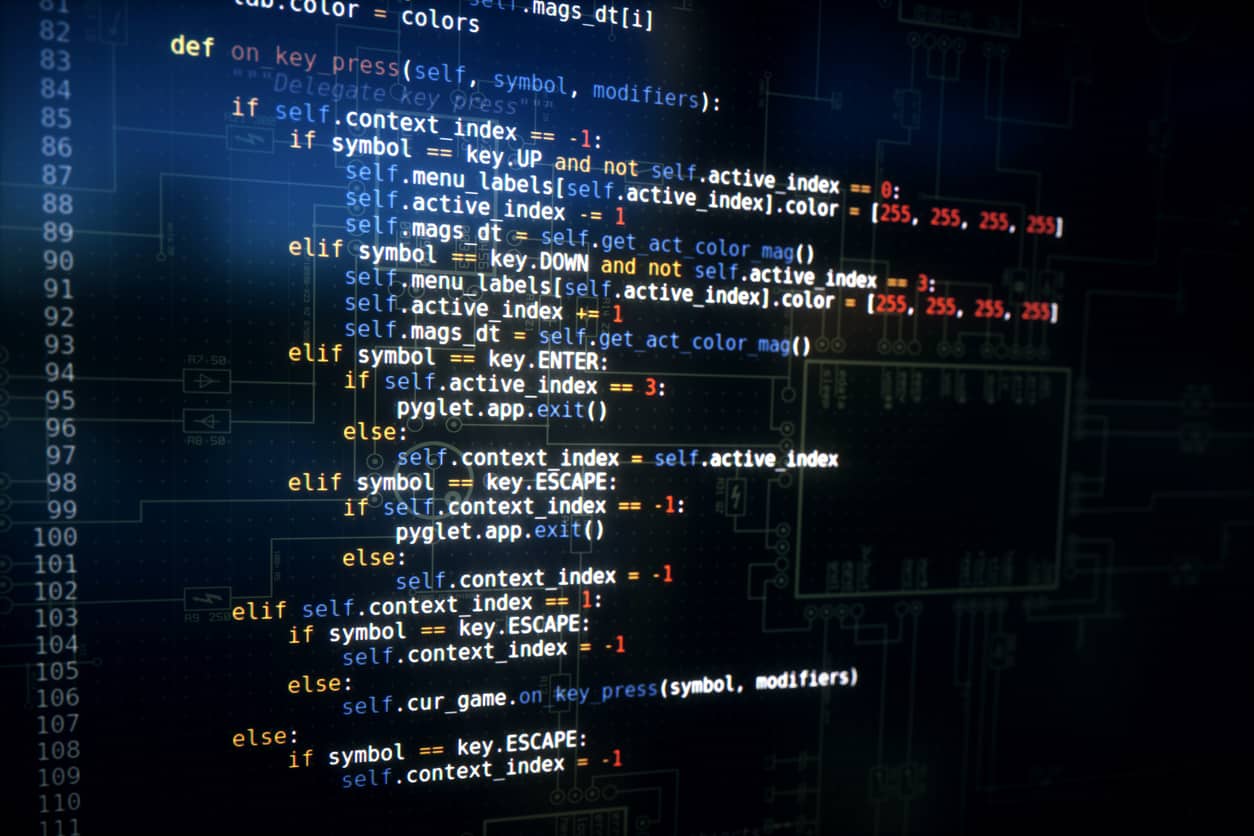

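Step 1: The first step is to import the required libraries. Two NLTK modules are necessary for building an efficient text summarizer. Both rely on NLTK data (the stop words corpus and the Punkt tokenizer data), which must be downloaded once with nltk.download() before the code will run.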

from nltk.corpus import stopwords

from nltk.tokenize import word_tokenize, sent_tokenize

Terms Used:

- Corpus

A collection of text is known as a corpus. This could be a data set such as the body of work of an author, the poems of a particular poet, etc. In this blog, the corpus we use is a data set of predetermined stop words.

- Tokenizers

A tokenizer divides a text into a series of tokens. There are three primary tokenizers: the sentence, word, and regex tokenizers. We will be using only the word and sentence tokenizers.
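To make the difference between sentence and word tokens concrete, here is a minimal sketch that uses only Python's built-in re module. The functions simple_sent_tokenize and simple_word_tokenize are hypothetical stand-ins written for this illustration, not NLTK functions; NLTK's sent_tokenize and word_tokenize handle abbreviations, contractions, and other edge cases far better.

```python
import re


def simple_sent_tokenize(text):
    """Rough stand-in for sent_tokenize: split after '.', '!' or '?'."""
    return [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]


def simple_word_tokenize(text):
    """Rough stand-in for word_tokenize: words and single punctuation marks."""
    return re.findall(r"\w+|[^\w\s]", text)


text = "Summaries save time. They keep the key points!"

print(simple_sent_tokenize(text))
# ['Summaries save time.', 'They keep the key points!']

print(simple_word_tokenize("Summaries save time."))
# ['Summaries', 'save', 'time', '.']
```

Note that the word tokenizer keeps punctuation as separate tokens, which is why a full stop can show up in a token list.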

Step 2: Remove the Stop Words from the text and keep the remaining words in a separate array.

Stop Words

Words such as 'is', 'an', 'a', 'the', and 'for' add little to the meaning of a sentence. For example, let us take a look at the following sentence:

GreatLearning is one of the most valuable websites for ArtificialIntelligence aspirants.

After removing the stop words from the above sentence, we reduce the number of words while preserving the meaning, as follows:

['GreatLearning', 'one', 'valuable', 'websites', 'ArtificialIntelligence', 'aspirants', '.']
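The filtering itself can be sketched as a simple list comprehension. The STOP_WORDS set below is a tiny hand-picked sample assumed for this illustration only; the list returned by stopwords.words("english") in NLTK is much larger. The helper name remove_stop_words is also hypothetical.

```python
# A tiny, hand-picked stop-word set for illustration only (an assumption);
# NLTK's stopwords.words("english") contains many more entries.
STOP_WORDS = {"is", "of", "the", "most", "for", "a", "an"}


def remove_stop_words(tokens, stop_words=STOP_WORDS):
    """Return the tokens that are not stop words (case-insensitive check)."""
    return [token for token in tokens if token.lower() not in stop_words]


tokens = ["GreatLearning", "is", "one", "of", "the", "most", "valuable",
          "websites", "for", "ArtificialIntelligence", "aspirants", "."]

filtered = remove_stop_words(tokens)
print(filtered)
# ['GreatLearning', 'one', 'valuable', 'websites',
#  'ArtificialIntelligence', 'aspirants', '.']
```

The comparison is done on the lowercased token because stop-word lists are normally stored in lowercase.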

Step 3: We can then create a frequency table of the words.

A Python dictionary can record how many times each word appears in the text after the stop words are removed. We can then apply this dictionary to each sentence to find out which sentences carry the most relevant content in the overall text.

stopWords = set(stopwords.words("english"))

words = word_tokenize(text)

freqTable = dict()
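The snippet above creates an empty dictionary; one possible way to fill it is sketched below. The function build_freq_table is a hypothetical helper, and two of its choices are assumptions rather than part of the original steps: words are lowercased before counting, and punctuation tokens are skipped.

```python
def build_freq_table(words, stop_words):
    """Count how often each non-stop word occurs in a list of tokens."""
    freq_table = {}
    for word in words:
        word = word.lower()  # assumption: count case-insensitively
        # Skip stop words and pure punctuation tokens (an assumption).
        if word in stop_words or not word.isalnum():
            continue
        freq_table[word] = freq_table.get(word, 0) + 1
    return freq_table


words = ["Cats", "sleep", ".", "Cats", "purr", "and", "sleep", "."]
freq_table = build_freq_table(words, stop_words={"and"})
print(freq_table)
# {'cats': 2, 'sleep': 2, 'purr': 1}
```

In the full pipeline, words would come from word_tokenize(text) and stop_words from the NLTK stop words set.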

Step 4: We will assign a score to each sentence depending on the words it contains and the frequency table.

Here, we use the sent_tokenize() function to create the array of sentences. We also need a dictionary to keep track of each sentence's score, which we can later go through to create the summary.

sentences = sent_tokenize(text)

sentenceValue = dict()
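One simple way to fill the score dictionary is to add up the frequencies of the words each sentence contains. This is a sketch under stated assumptions: score_sentences is a hypothetical helper, words are extracted with a basic regular expression instead of word_tokenize, and the frequency table is assumed to have lowercase keys.

```python
import re


def score_sentences(sentences, freq_table):
    """Score each sentence as the sum of the frequencies of its words."""
    sentence_value = {}
    for sentence in sentences:
        # Basic word extraction (an assumption; word_tokenize could be used).
        words = re.findall(r"\w+", sentence.lower())
        # Words missing from the table (e.g. stop words) contribute 0.
        sentence_value[sentence] = sum(freq_table.get(w, 0) for w in words)
    return sentence_value


sentences = ["Cats sleep.", "Cats purr and sleep.", "Dogs bark."]
freq_table = {"cats": 2, "sleep": 2, "purr": 1, "dogs": 1, "bark": 1}

sentence_value = score_sentences(sentences, freq_table)
print(sentence_value)
# {'Cats sleep.': 4, 'Cats purr and sleep.': 5, 'Dogs bark.': 2}
```

Because the plain sum favours long sentences, a common variation is to divide each score by the number of words in the sentence; that normalization is not part of the steps described here.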

Step 5: Choose a threshold score against which to compare the sentences within the text.

A straightforward approach to comparing the scores is to compute the average sentence score, which can serve as a reasonable threshold.

sumValues = 0

for sentence in sentenceValue:
    sumValues += sentenceValue[sentence]

average = int(sumValues / len(sentenceValue))

Apply the threshold value and add the sentences whose scores exceed it to the summary, in their original order.
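This final step can be sketched as follows. The helper build_summary and its multiplier parameter are hypothetical: a multiplier of 1.0 uses the plain average as the threshold, and raising it is one assumed way to get a shorter summary. Unlike the snippet above, this sketch keeps the average as a float instead of truncating it with int().

```python
def build_summary(sentences, sentence_value, multiplier=1.0):
    """Join the sentences that score above multiplier * average score."""
    if not sentence_value:
        return ""
    # Float average (the snippet in the text truncates it with int()).
    average = sum(sentence_value.values()) / len(sentence_value)
    threshold = multiplier * average
    # Iterating over the sentence list preserves the original order.
    selected = [s for s in sentences if sentence_value.get(s, 0) > threshold]
    return " ".join(selected)


sentences = ["Cats sleep.", "Cats purr and sleep.", "Dogs bark."]
sentence_value = {"Cats sleep.": 4, "Cats purr and sleep.": 5, "Dogs bark.": 2}

summary = build_summary(sentences, sentence_value)
print(summary)
# Cats sleep. Cats purr and sleep.

shorter = build_summary(sentences, sentence_value, multiplier=1.2)
print(shorter)
# Cats purr and sleep.
```

Here the average score is 11 / 3, roughly 3.67, so the two sentences scoring 4 and 5 are kept with the default threshold, and only the top sentence survives the stricter one.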

Output Summary

This brings us to the end of the blog on Text Summarization in Python. We hope that you were able to learn more about the concept. If you wish to learn more about such concepts, take up the free online Python for Machine Learning course offered by Great Learning Academy.
