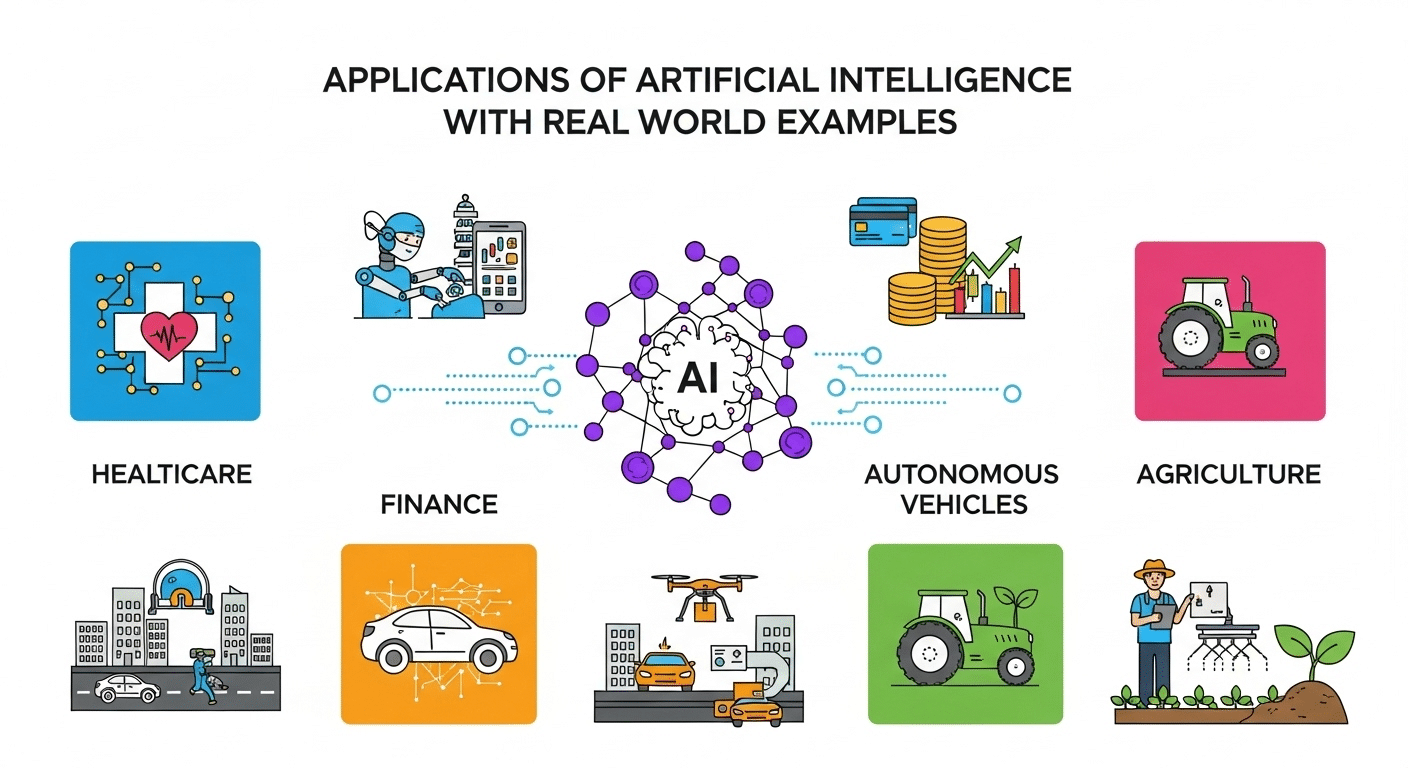

Detecting fraud, recommending songs, optimizing logistics, providing translations. Intelligent systems are transforming our lives for the better. Artificial intelligence has already become an important instrument across all sectors.

In the last few years, artificial intelligence has evolved rapidly. Yet there is still debate about what constitutes “AI ethics” and which ethical requirements, technical standards and best practices are needed to realize it.

At the ‘common good in the digital age’ tech conference recently held in Vatican City, Pope Francis said, “If mankind’s so-called technological progress were to become an enemy of the common good, this would lead to an unfortunate regression to a form of barbarism dictated by the law of the strongest.”

Human agency and oversight

Respect for human autonomy and fundamental rights must be at the heart of AI ethics rules. The European Union guidelines prescribe three measures to ensure that this requirement is reflected in the system:

1. To make sure that an AI system does not hamper fundamental rights, a fundamental rights impact assessment should be undertaken prior to its development.

2. Human agency should be ensured, meaning users should understand and interact with AI systems to a satisfactory degree.

3. A machine should never be in complete control, so there should always be human oversight. Humans should always be able to override a decision made by a system, for instance through a stop button or a procedure to abort an operation.
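As a rough illustration of the stop button idea in the third measure, here is a minimal Python sketch. The class `OverridableTask` and its method names are hypothetical, invented for this example; the point is only that automated work proceeds in small steps and checks a human-controlled flag before each one.

```python
import threading
import time


class OverridableTask:
    """Hypothetical task that checks a human-controlled stop flag before every step."""

    def __init__(self, steps):
        self.steps = steps
        self.completed = 0
        self._stop = threading.Event()  # plays the role of the "stop button"

    def press_stop(self):
        """Called by a human operator to abort the operation."""
        self._stop.set()

    def run(self):
        for _ in range(self.steps):
            if self._stop.is_set():
                return "aborted"
            time.sleep(0.01)  # placeholder for one unit of automated work
            self.completed += 1
        return "finished"


if __name__ == "__main__":
    task = OverridableTask(steps=1000)
    # Simulate an operator pressing stop shortly after the task starts.
    threading.Timer(0.05, task.press_stop).start()
    print(task.run(), "after", task.completed, "steps")
```

A real system would need more than this (for example, making sure an abort leaves data in a consistent state), but the pattern of checking for a human override at every step carries over.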

Technical robustness and safety

Trustworthy AI requires algorithms to be secure, reliable and robust enough to deal with inconsistencies during all life-cycle stages of an AI system. This calls for testing AI software to understand and mitigate the risks of cyber-attacks. These systems must be protected against adversarial exploitation, such as data poisoning and hacking. A successful attack could cause serious damage or force a system shutdown, and it could also corrupt data or lead to its misuse, which is itself a threat to security.

Privacy and data protection

The AI community should ensure that privacy and personal data are protected, both when building and when running an AI system. People should have full control over their own data; a breach of personal data could be extremely dangerous for the people involved. And once trust is lost over something as serious as a privacy breach, it is difficult to regain. AI developers should apply design techniques such as data encryption to protect privacy and personal data. Moreover, the quality of the data must be assured, i.e., it should be free of errors, biases and mistakes.

Transparency

Transparency is paramount. One way to ensure it is to document the datasets and processes that are used in building AI systems. AI systems should be identifiable as such, and humans should be aware when they are interacting with one. Furthermore, an AI system’s actions and decisions are subject to the principle of explainability, meaning it should be possible for humans to understand and trace them. A number of companies, researchers and citizen advocates argue that government regulation is a good way to maintain transparency and, through it, human accountability. Others argue that this may slow down the rate of innovation. The United Nations, the European Union and many countries are working on strategies to regulate transparency.
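Documenting datasets and processes can start very simply. The sketch below is one possible shape for such a record, not a standard format: the `DatasetRecord` class, its fields and the sample values are all assumptions made up for illustration.

```python
import json
from dataclasses import dataclass, field, asdict


@dataclass
class DatasetRecord:
    """Hypothetical documentation record for one dataset used to build an AI system."""
    name: str
    source: str
    collected: str
    known_limitations: list = field(default_factory=list)
    processing_steps: list = field(default_factory=list)

    def to_json(self):
        # Serialize the record so it can be stored and reviewed alongside the model.
        return json.dumps(asdict(self), indent=2)


# All values below are invented sample data.
record = DatasetRecord(
    name="loan_applications_demo",
    source="internal CRM export",
    collected="2019-01 to 2019-06",
    known_limitations=["few applicants under 21"],
    processing_steps=["removed duplicate rows", "dropped free-text notes"],
)
print(record.to_json())
```

Keeping a record like this next to each model version gives reviewers a starting point for tracing a decision back to the data and steps that produced it.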

Diversity, non-discrimination and fairness

To avoid unfair bias, AI developers should make sure that the design of their algorithms is not biased. People who may be directly or indirectly affected by AI systems should be consulted and involved in their development and implementation. AI systems should be designed to account for the whole range of human abilities, skills and requirements, and should be accessible to persons with disabilities.
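One simple way to start looking for unfair bias is to compare how often a system gives a favourable decision to different groups. The Python sketch below computes per-group approval rates and the gap between the highest and lowest rate. The function names and the sample data are hypothetical, and a large gap is only a signal to investigate further, not proof of discrimination.

```python
from collections import defaultdict


def selection_rates(decisions):
    """Take (group, approved) pairs and return the approval rate for each group."""
    totals = defaultdict(int)
    approved = defaultdict(int)
    for group, ok in decisions:
        totals[group] += 1
        if ok:
            approved[group] += 1
    return {g: approved[g] / totals[g] for g in totals}


def parity_gap(rates):
    """Difference between the highest and the lowest group approval rate."""
    return max(rates.values()) - min(rates.values())


# Invented sample decisions for two groups, "A" and "B".
sample = [
    ("A", True), ("A", True), ("A", False), ("A", True),
    ("B", True), ("B", False), ("B", False), ("B", False),
]
rates = selection_rates(sample)
print(rates, parity_gap(rates))
```

In this made-up sample, group A is approved three times out of four and group B once out of four, so the gap is 0.5. What counts as an acceptable gap depends on the application and is a decision for people, not for the script.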

Societal and environmental well-being

AI systems should promote positive social change and encourage sustainability. In other words, measures that make AI systems more environmentally friendly should be encouraged, for instance, opting for less harmful ways of consuming energy. The impact of these systems on people’s physical and mental well-being must be considered and monitored as well. Moreover, the effects on society and democracy should be assessed. In a world where people are fighting for climate justice, AI ethics is a good way to ensure that we do not add to the environmental damage that already exists.

Accountability

Mechanisms should be in place to ensure responsibility and accountability for AI systems and their outcomes. Internal and external audits should be carried out, and tools for reporting negative impacts and for assessing impact should be available. Organisations should be able to safeguard their customers’ personal data and be accountable if a technology failure takes place. It is important to protect human rights and dignity. Machine learning algorithms can be very unpredictable, and it can be difficult to anticipate the effect they will have on an individual, which challenges the traditional concept of accountability. Thus, AI ethics should be followed when we adopt AI in our businesses.
