The growing demand for artificial intelligence (AI) has fundamentally reshaped how modern businesses operate. Current data suggest that 69% of professionals believe their jobs are being affected by technology, especially AI.

Despite this disruption, optimism remains remarkably high, with 78% of professionals feeling positive about the potential impact of AI on their careers.

However, as investments in generative and predictive models skyrocket, organizations face a critical challenge: separating tangible financial returns from technological hype.

Executives often struggle to determine if they are investing in long-term value or simply following a trend. This prompts the critical question of whether companies are overhyping AI adoption without real ROI.

To truly capitalize on these tools, businesses must transition from experimental pilots to sustainable, ROI-driven ecosystems. Let's take a closer look.

Why Is AI ROI So Hard to Measure?

Measuring the Return on Investment (ROI) for artificial intelligence projects is more complex than for traditional software deployments.

Unlike standard IT upgrades, AI systems evolve, learn, and often impact the organization in ways that are not immediately quantifiable.

- Intangible Benefits vs. Direct Revenue Impact:

Traditional software provides clear operational outputs. AI, however, often drives intangible benefits like enhanced customer satisfaction, improved employee morale, or better strategic forecasting. Translating a 15% increase in customer sentiment into a direct dollar amount is inherently difficult.

- Long Gestation Periods of AI Projects:

AI solutions require significant time for data gathering, model training, validation, and continuous fine-tuning. Positive ROI is rarely immediate. Stakeholders must be prepared for a longer runway before the algorithm begins to generate measurable value.

- Cross-Functional Dependencies:

A successful AI deployment is never siloed. It requires seamless collaboration between data engineers, IT infrastructure teams, compliance officers, and business unit leaders. If one dependency fails, the entire project's ROI suffers.

- Hidden Costs:

The sticker price of an AI tool is only a fraction of the Total Cost of Ownership (TCO). Hidden expenses quickly erode ROI:

- Data cleaning and preparation: Algorithms require pristine data. Preparing this data is highly labor-intensive.

- Infrastructure and cloud costs: Training machine learning models, especially Large Language Models (LLMs), demands massive computational power and expensive cloud storage.

- Talent acquisition: Hiring highly specialized Data Scientists and ML Engineers drives up project costs significantly.

To navigate this complexity, professionals must distinguish what is worth learning from what is hype as AI becomes mainstream. Understanding the foundational mechanics is equally important, and resources like the Free AI For Leaders Course or training in AI Product Management can equip teams to forecast these hidden costs more accurately.

Common Red Flags in AI ROI Claims

When evaluating vendor pitches or internal project proposals, leaders must maintain a healthy skepticism. Inflated claims often obscure the true business value of an AI implementation.

- Over-Reliance on Vanity Metrics: Vendors frequently highlight metrics like model accuracy (e.g., "99% accuracy rate") or processing speed. While technically impressive, high accuracy does not automatically equate to cost savings or revenue generation.

- No Baseline Comparison: A claim that an AI tool saves 100 hours a week is meaningless if the organization does not know how many hours were previously spent on the task or how the saved hours are being utilized. A lack of rigorous "before vs. after" data is a major red flag.

- Ignoring Operational Costs: An AI solution might increase sales revenue by 5%, but if the cloud computing costs required to run the model consume 6% of revenue, the net ROI is negative. Always look for claims that account for continuous operational overhead.

- "Pilot Success" Projected as Enterprise-Scale ROI: A model that works perfectly on a clean, localized dataset often breaks down when exposed to the messy, unstructured data of an entire enterprise. Scaling success is never perfectly linear.

- Lack of Clear Business KPIs: If an AI initiative cannot be tied back to a core business objective, such as churn reduction or inventory optimization, it is likely a vanity project. For example, using AI to automate reporting should directly tie to reduced labor costs or faster decision cycles.
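
As a quick sanity check on claims like the ones above, the sketch below nets a claimed revenue uplift against ongoing run costs. The 5% and 6% figures come from the operational-cost example; the 10,000,000 baseline revenue and the function name `net_annual_impact` are hypothetical. The sketch assumes both percentages are measured against the same baseline revenue and treats the uplift as gross revenue (no margin is applied).

```python
def net_annual_impact(baseline_revenue: float,
                      uplift_pct: float,
                      run_cost_pct: float) -> float:
    """Net annual impact of an AI tool, in currency units.

    uplift_pct   -- claimed revenue increase, as a fraction of baseline revenue
    run_cost_pct -- ongoing run cost, as a fraction of baseline revenue
                    (assumption: same revenue base for both percentages)
    """
    added_revenue = baseline_revenue * uplift_pct
    run_cost = baseline_revenue * run_cost_pct
    return added_revenue - run_cost


# Hypothetical baseline revenue; 5% uplift and 6% run cost are from the text.
impact = net_annual_impact(10_000_000, 0.05, 0.06)
print(f"Net annual impact: {impact:,.0f}")
```

A negative result means the tool costs more to run than it brings in, regardless of how impressive the headline uplift sounds.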

To rigorously audit these claims, professionals should understand the technical lifecycle of these tools, a competency covered thoroughly in courses defining AI Product Manager Roles, Skills, and Responsibilities.

Key Metrics That Actually Matter

To cut through the noise, organizations must categorize their AI evaluations into clear, measurable buckets that align directly with corporate objectives.

- Financial Metrics:

- Revenue Uplift: Increases in cross-selling opportunities, higher conversion rates, and optimized pricing strategies.

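- ROI Formula: The ultimate benchmark remains ROI = (Gain from Investment – Cost of Investment) / Cost of Investment, where the gain is the gross benefit before costs are subtracted. Equivalently, ROI = Net Gain / Cost of Investment.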

- Cost Savings: Reduction in human capital expenditures, lowered operational overhead, and decreased hardware costs.

- Operational Metrics:

- Process Efficiency Improvements: Measuring the reduction of bottlenecks in workflows.

- Time Saved: Quantifying the exact hours reclaimed from manual, repetitive tasks.

- Error Reduction: Tracking the decrease in human errors, particularly in compliance, data entry, and manufacturing.

- Strategic Metrics:

- Customer Experience Improvement: Tracking Net Promoter Scores (NPS) and customer retention rates pre- and post-implementation.

- Decision-Making Speed: Assessing how quickly leadership can act on predictive insights. For instance, applying generative AI to business intelligence can substantially compress reporting timelines.

- Competitive Advantage: Evaluating market share gains directly attributable to faster, AI-driven product iterations.
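
The ROI calculation listed under the financial metrics can be expressed as a small helper. This is a minimal sketch: `roi`, `total_gain`, and `total_cost` are illustrative names, and `total_gain` is assumed to be the gross benefit before any costs are subtracted.

```python
def roi(total_gain: float, total_cost: float) -> float:
    """Return ROI as a ratio: (gain - cost) / cost.

    total_gain is the gross benefit before costs are subtracted.
    """
    if total_cost <= 0:
        raise ValueError("total_cost must be positive")
    return (total_gain - total_cost) / total_cost


# Hypothetical figures: 150,000 in gross benefit on 100,000 of cost.
print(f"ROI: {roi(150_000, 100_000):.0%}")
```

Keeping gross gain and cost as separate inputs avoids subtracting the cost twice, which is an easy mistake when a "net gain" figure is passed in.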

To grasp how these strategic metrics apply to client interactions, the AI and Customer Journey Essentials course offers excellent concepts and foundational knowledge.

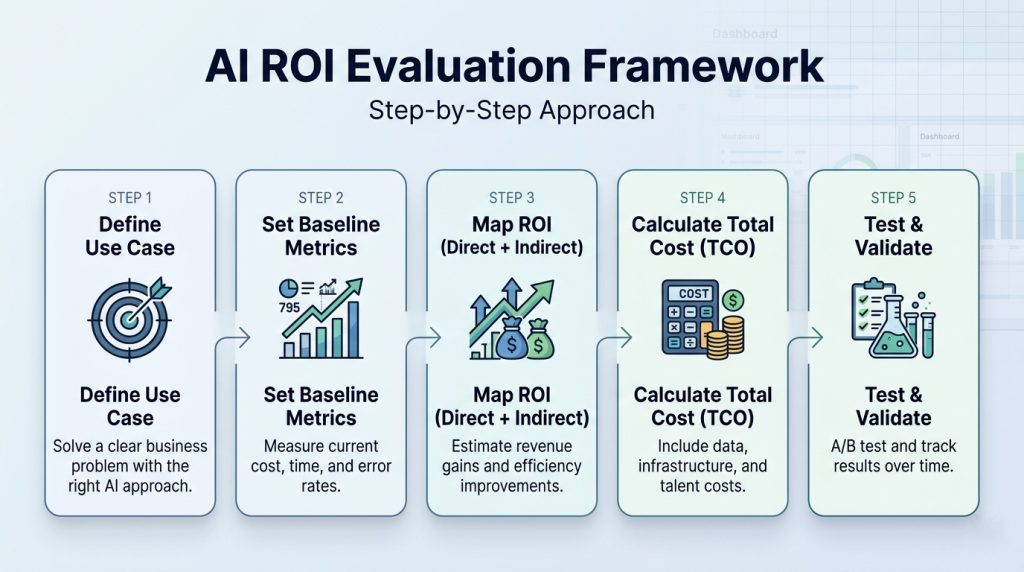

Framework to Evaluate AI ROI (Step-by-Step)

To effectively measure the financial and operational returns of your artificial intelligence initiatives, you must follow a step-by-step evaluation framework.

Step 1: Define the Business Problem and AI Use Case Clearly

Before investing in any technology, you must isolate a highly specific business bottleneck. Avoid the trap of deploying Large Language Models (LLMs) or neural networks simply to appear innovative.

- Conduct a Needs Analysis: Identify if your problem requires predictive analytics (forecasting sales), natural language processing (customer support), or computer vision (quality control).

- Map Capabilities to Objectives: Ensure the selected algorithm directly addresses the isolated bottleneck. If you struggle to translate overarching business goals into actionable technical requirements, you might choose the wrong AI model for your operations.

- Determine Feasibility: Assess whether you have the necessary data quality to support this specific use case before proceeding to the next step.

Step 2: Establish Quantitative Baseline Metrics

You cannot calculate an accurate return on investment without a precise understanding of your current operational costs and performance levels.

- Audit Current Workflows: Document the exact human hours currently spent on the processes you intend to optimize. This is crucial before automating routine tasks with AI so that you have a definitive "before" and "after" snapshot.

- Quantify Error Rates: Record the current frequency of manual errors, customer churn rates, or manufacturing defects.

- Set the Benchmark: Establish these pre-AI figures as your definitive baseline. Future performance with the AI model in place will be compared against this baseline, and the difference is your absolute gain.
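
The baseline comparison described in this step can be sketched as follows. The `Snapshot` fields and all figures are hypothetical; in practice you would record whichever metrics your audit produced.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Snapshot:
    """Process measurements for one period (fields are illustrative)."""
    hours_per_week: float  # human hours spent on the process
    error_rate: float      # fraction of items containing an error


def gain_vs_baseline(baseline: Snapshot, post: Snapshot) -> dict[str, float]:
    """Return improvements as baseline minus post-deployment values."""
    return {
        "hours_saved_per_week": baseline.hours_per_week - post.hours_per_week,
        "error_rate_reduction": baseline.error_rate - post.error_rate,
    }


# Hypothetical figures for illustration only.
before = Snapshot(hours_per_week=400, error_rate=0.04)
after = Snapshot(hours_per_week=300, error_rate=0.025)
gains = gain_vs_baseline(before, after)
print(gains)
```

Without the `before` snapshot there is nothing to subtract from, which is why the baseline must be captured before the model goes live.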

Step 3: Map Direct vs. Indirect ROI Trajectories

AI generates value along several dimensions. You must categorize these returns to build a comprehensive financial case.

- Forecast Direct ROI: Calculate the projected hard financial gains. This includes expected revenue uplift from AI-driven cross-selling and direct cost reductions from decreased software licensing or manual labor requirements.

- Forecast Indirect ROI: Assign proxy values to intangible benefits. Estimate the financial impact of improved employee bandwidth, accelerated strategic decision-making, and enhanced customer satisfaction scores (CSAT).
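
One way to combine the two forecasts is to discount each indirect estimate by how confident you are in its proxy value. The sketch below assumes that approach; the category names, amounts, and confidence weights are all hypothetical, and the weighting scheme itself is an illustration rather than a standard method.

```python
# Direct benefits: hard financial gains, counted at full value.
direct_benefits = {
    "cross_sell_uplift": 200_000,
    "labor_cost_reduction": 150_000,
}

# Indirect benefits: (proxy estimate, confidence weight between 0 and 1).
indirect_benefits = {
    "employee_bandwidth": (80_000, 0.5),
    "csat_improvement": (60_000, 0.25),
}


def total_forecast_benefit(direct: dict[str, float],
                           indirect: dict[str, tuple[float, float]]) -> float:
    """Sum direct gains plus confidence-weighted indirect proxy values."""
    direct_total = sum(direct.values())
    indirect_total = sum(value * weight for value, weight in indirect.values())
    return direct_total + indirect_total


forecast = total_forecast_benefit(direct_benefits, indirect_benefits)
print(f"Forecast annual benefit: {forecast:,.0f}")
```

Reporting the direct and weighted indirect totals separately keeps the hard numbers visible even if stakeholders dispute the proxies.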

Step 4: Calculate the Comprehensive Total Cost of Ownership (TCO)

The initial purchase or licensing price of an AI tool is only a fraction of its true cost. You must meticulously calculate the TCO to prevent hidden expenses from destroying your ROI.

- Compute Data Costs: Budget for the extensive hours required for data extraction, cleaning, and labeling. AI models require pristine data pipelines to function.

- Calculate Infrastructure Overhead: Factor in the ongoing costs of cloud storage, API tokens, and the intense GPU compute power required to train and run machine learning models.

- Account for Talent Acquisition: Factor in the premium salaries required to hire Data Scientists, MLOps Engineers, and specialized analysts needed to maintain the system.
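
The cost categories above can be rolled into a single TCO figure over a planning horizon. This is a minimal sketch: the `CostItem` structure, the three-year horizon, and every amount are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CostItem:
    """One cost category with an upfront and a recurring component."""
    name: str
    one_time: float  # paid once at the start
    annual: float    # paid every year of the horizon


def total_cost_of_ownership(items: list[CostItem], years: int) -> float:
    """Sum upfront costs plus recurring costs over the given horizon."""
    return sum(item.one_time + item.annual * years for item in items)


# Hypothetical figures for illustration only.
cost_items = [
    CostItem("licensing", one_time=0, annual=120_000),
    CostItem("data_preparation", one_time=90_000, annual=30_000),
    CostItem("infrastructure", one_time=0, annual=60_000),
    CostItem("talent", one_time=20_000, annual=250_000),
]
tco = total_cost_of_ownership(cost_items, years=3)
print(f"3-year TCO: {tco:,.0f}")
```

In this made-up example, licensing accounts for 360,000 of a 1,490,000 total, which illustrates how small the sticker price can be relative to the full cost.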

Step 5: Execute Structured Testing and Define Timeframes

Never deploy an AI model enterprise-wide without rigorous, isolated testing to validate your ROI projections.

- Implement A/B Testing: Run your new AI model (the variant) simultaneously against your traditional human workflow (the control). Compare the output quality and speed directly.

- Establish a Realistic Runway: Acknowledge that machine learning models require a "burn-in" period. Set distinct timelines for when you expect short-term operational efficiencies versus long-term strategic revenue gains.
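
To judge whether an A/B result reflects a real difference rather than noise, one common option is a two-proportion z-test. The sketch below uses only the Python standard library; the choice of this particular test, the "success" definition (for example, a task completed without rework), and the sample counts are assumptions for illustration.

```python
import math


def two_proportion_z_test(success_a: int, n_a: int,
                          success_b: int, n_b: int) -> tuple[float, float]:
    """Compare success rates of control (a) and variant (b).

    Returns (z, two_sided_p_value) using the pooled-proportion z-test.
    """
    p_a = success_a / n_a
    p_b = success_b / n_b
    pooled = (success_a + success_b) / (n_a + n_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if std_err == 0:
        raise ValueError("standard error is zero; rates are all 0 or all 1")
    z = (p_b - p_a) / std_err
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Hypothetical counts: human workflow (control) vs. AI workflow (variant).
z_score, p_value = two_proportion_z_test(120, 1000, 150, 1000)
print(f"z = {z_score:.2f}, p = {p_value:.3f}")
```

A small p-value suggests the difference is unlikely to be chance alone, but it says nothing about whether the improvement is large enough to cover the TCO.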

Professionals are already adapting to these workflows; 80% of professionals report that they use GenAI to learn new skills, with 60% saying they use it in their work 'always' or 'frequently'.

To lead this charge, the Duke Chief Artificial Intelligence Officer Program is a premier choice. This program equips leaders with actionable frameworks to identify high-impact AI opportunities, manage complex digital transformations, and navigate the ethical and operational challenges of scaling AI ecosystems globally.

Furthermore, engaging in specialized training like AI for Business Innovation: From GenAI to POCs helps your framework move from theory to viable product.

AI for Business: From GenAI to POCs

Learn how AI drives business innovation, from GenAI to POCs. Gain practical skills to implement AI solutions and lead in your industry.

Case Examples: Real vs Inflated AI ROI

Analyzing practical applications helps clarify the boundaries between realistic returns and inflated projections.

Example 1: Fraud Detection System (Clear ROI)

A financial services firm deploys a machine learning-based fraud detection system. Pre-implementation fraud losses are documented at $4.2M annually. Post-deployment, losses drop to $1.1M. With a $600K TCO, the net ROI is measurable, attributable, and defensible. This is textbook AI ROI: clear baseline, direct cost saving, documented causal link.
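
The arithmetic behind this example can be made explicit. The loss and TCO figures come from the example itself; the sketch assumes the $600K TCO covers the same one-year period as the fraud losses, which the example does not state.

```python
# Figures from the fraud detection example (annual, in dollars).
pre_deployment_losses = 4_200_000
post_deployment_losses = 1_100_000
tco = 600_000  # assumption: same one-year period as the losses

avoided_losses = pre_deployment_losses - post_deployment_losses
net_gain = avoided_losses - tco
roi_ratio = net_gain / tco

print(f"Avoided losses: ${avoided_losses:,}")
print(f"Net gain:       ${net_gain:,}")
print(f"ROI:            {roi_ratio:.0%}")
```

Under that assumption, the system avoids $3.1M in losses, nets $2.5M after costs, and returns roughly 417% on the investment.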

Example 2: Chatbot Implementation (Mixed ROI)

A telecom operator deploys a conversational AI chatbot to deflect inbound support calls. Pilot metrics show 65% deflection. However, at enterprise scale, deflection falls to 38% due to query complexity and integration gaps. Unaccounted escalation costs and customer dissatisfaction partially erode projected savings. ROI is positive but significantly overstated in the business case.
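
To see how much the pilot figure overstates savings, compare projected and realized deflection on the same call volume. The 65% and 38% rates come from the example; the call volume and cost per call are hypothetical, and the sketch ignores the escalation and dissatisfaction costs mentioned above, so the real gap would be wider.

```python
def deflection_savings(annual_calls: int,
                       cost_per_call: float,
                       deflection_rate: float) -> float:
    """Gross savings from calls handled by the chatbot instead of an agent."""
    return annual_calls * cost_per_call * deflection_rate


ANNUAL_CALLS = 1_000_000  # hypothetical volume
COST_PER_CALL = 5.0       # hypothetical cost of an agent-handled call

projected = deflection_savings(ANNUAL_CALLS, COST_PER_CALL, 0.65)  # pilot rate
realized = deflection_savings(ANNUAL_CALLS, COST_PER_CALL, 0.38)   # at scale
overstatement = projected - realized
realized_share = realized / projected

print(f"Projected: {projected:,.0f}")
print(f"Realized:  {realized:,.0f}")
print(f"Realized share of projection: {realized_share:.0%}")
```

Whatever the volume and unit cost, realized gross savings are 38/65 of the projection, or a little under 60%.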

Example 3: AI Personalization (Long-Term ROI, Harder to Measure)

A retail brand uses a recommendation engine to personalize digital experiences. Direct attribution is complicated by multi-touch customer journeys and seasonality. ROI emerges over 18–24 months through customer retention uplift and average order value increase. This is a legitimate but illiquid investment, one that requires patience and robust attribution modeling to evaluate.

What separates these examples is not the technology; it is the rigor of the baseline, the attribution, and the business case behind each one.

If your team is at the stage of moving from idea to proof of concept, the premium AI for Business Innovation: From GenAI to POCs course from Great Learning provides a structured approach to validating AI use cases before full investment, reducing the risk of committing resources to initiatives that cannot demonstrate clear P&L impact at scale.


Building an AI-First Yet ROI-Driven Culture

Technology alone does not deliver AI ROI. The organizational environment must be deliberately shaped to convert AI capability into business outcomes.

1. Educating Leadership Beyond Buzzwords

Executives who understand only the surface-level promise of AI, without grasping concepts like model bias, data governance, and inference costs, are poorly equipped to sponsor or evaluate AI programs. The core AI skills that leaders must master represent the minimum viable fluency for sponsoring high-stakes AI investments that lead to better growth and higher ROI.

2. Setting Realistic Expectations

AI is not a silver bullet. Setting over-optimistic timelines or ROI projections is a primary driver of stakeholder disillusionment. Build ROI cases conservatively and revisit them quarterly.

3. Investing in the Right Talent

Sustainable AI ROI requires a human capital strategy. Organizations must invest in data scientists, ML engineers, MLOps practitioners, and AI product managers, roles that are in growing demand globally.

Demand for AI talent continues to outpace supply, making in-house upskilling a competitive advantage. Cloud infrastructure literacy is also becoming non-negotiable for leaders overseeing AI budgets.

As AWS remains a leading provider of enterprise AI infrastructure, the premium AWS Generative AI for Leaders course from Great Learning equips decision-makers with the vocabulary, frameworks, and cost models needed to evaluate cloud-based AI investments intelligently, without being wholly dependent on technical teams for financial oversight.

AWS Generative AI Course for Leaders

Learn how AWS tools support faster work, stronger decisions, and business growth. Build confidence with generative tech and apply it for measurable results.

4. Creating Feedback Loops

Establish continuous feedback mechanisms between AI system outputs and downstream business KPIs. Model performance dashboards should be reviewed alongside P&L data, not in isolation within a technical team.

To champion this cultural transformation, the Artificial Intelligence Course for Managers & Leaders is highly recommended. This comprehensive course empowers non-technical managers to confidently evaluate AI vendor proposals, spearhead data-driven initiatives, and align technical teams with overarching business goals, ensuring every AI project has a direct line of sight to profitability.

Post Graduate Program in Artificial Intelligence for Leaders

Turn insights into action—use AI to make faster decisions, optimize teams, and lead digital transformation.

Tools and Techniques to Track AI ROI Effectively

Organizations serious about AI ROI measurement should deploy the following techniques:

- A/B Testing for AI Models: Randomized controlled experiments that compare AI-assisted outcomes against a control group establish causal attribution, the gold standard for ROI measurement.

- KPI Dashboards: Centralized dashboards that align AI operational metrics (prediction accuracy, throughput) with business KPIs (cost per unit, revenue per customer) in real time.

- Attribution Models: Multi-touch attribution models that distribute business value across the AI system, human decision-making, and external factors, preventing both over-crediting and under-crediting AI.

- Cost-Benefit Tracking Systems: Continuous tracking of TCO against realized benefits, updated at least quarterly.
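
A cost-benefit tracker can be as simple as a running total of benefits minus costs per quarter. The sketch below finds the first quarter in which the cumulative net turns non-negative; the function name and the quarterly figures (in thousands) are hypothetical.

```python
from itertools import accumulate
from typing import Optional, Sequence


def payback_quarter(costs: Sequence[float],
                    benefits: Sequence[float]) -> Optional[int]:
    """Return the 1-based quarter where cumulative net benefit reaches zero
    or better, or None if that never happens in the tracked period."""
    if len(costs) != len(benefits):
        raise ValueError("costs and benefits must cover the same quarters")
    quarterly_net = (b - c for b, c in zip(benefits, costs))
    for quarter, cumulative in enumerate(accumulate(quarterly_net), start=1):
        if cumulative >= 0:
            return quarter
    return None


# Hypothetical quarterly figures, in thousands.
quarterly_costs = [300, 150, 100, 100, 100, 100]
quarterly_benefits = [0, 50, 150, 250, 300, 300]

breakeven = payback_quarter(quarterly_costs, quarterly_benefits)
print(f"Cumulative break-even in quarter: {breakeven}")
```

Re-running this each quarter with realized rather than forecast figures shows whether the payback date is holding or slipping.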

Conclusion

Evaluating AI ROI and identifying sustainable implementation strategies requires organizations to look past the industry hype and focus strictly on tangible business value.

By establishing clear baseline metrics, acknowledging the total cost of ownership, and demanding rigorous "before and after" data, businesses can safeguard their investments.

Ultimately, transitioning from isolated AI experiments to enterprise-wide, ROI-positive ecosystems demands a culture that values continuous learning, strategic patience, and relentless financial accountability.