Earn a certificate & get recognized

Introduction to XGBoost

Dive into the world of XGBoost with our complimentary course! Acquire in-depth knowledge and enhance your data science skills. Enroll now for a transformative learning experience!

698 learners enrolled so far

Stand out with an industry-recognized certificate

10,000+ certificates claimed, get yours today!

Get noticed by top recruiters

Share on professional channels

Globally recognised

Land your dream job

Skills you will gain

Python skills

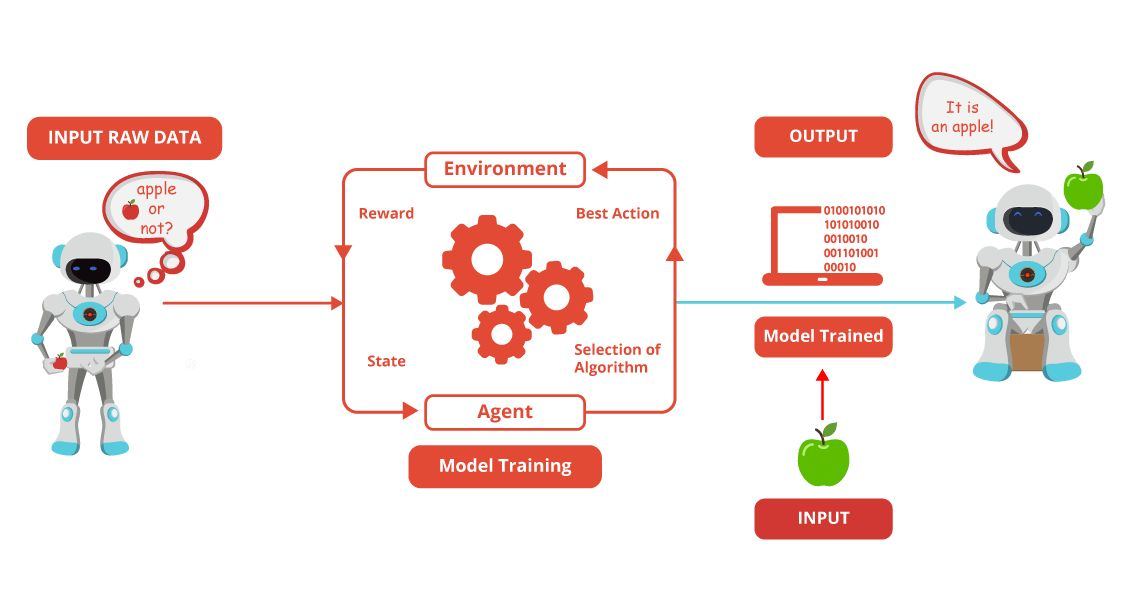

Basic ML concepts

Key Highlights

Get free course content

Master in-demand skills & tools

Test your skills with quizzes

About this course

Introduction to XGBoost is a free course that begins with an overview of XGBoost, delving into its significance in machine learning. Understand the fundamentals of Gradient Boosting, the underlying technique powering XGBoost's success. Explore the intricacies of XGBoost's functionality and witness its application in real-world scenarios.

Our step-by-step guide walks you through the process of implementing XGBoost effectively. From data preparation to model evaluation, discover the critical steps involved in harnessing the power of XGBoost. Engage in hands-on activities to reinforce your learning, gaining practical experience in implementing XGBoost for predictive modeling. Elevate your machine learning skills and empower yourself with the capabilities of XGBoost in this enriching and accessible course.

Course outline

Introduction to XGBoost

In this module, we will learn about XGBoost (eXtreme Gradient Boosting), a highly efficient and flexible gradient boosting library that is widely used for machine learning tasks, especially on structured and tabular data.

Introduction to Gradient Boosting

In this module, we will learn about Gradient Boosting, a machine learning technique for regression, classification, and other tasks. It builds a model from weak learners (typically decision trees) in a stage-wise fashion, with each stage reducing the remaining error.

XGBoost - Working

In this module, we will learn about the mechanics of XGBoost, including initialization, the loss function, computing residuals, and constructing a decision tree.

Steps to Perform XGBoost

In this module, we will learn about the steps involved in applying XGBoost, including data preparation, setting parameters, training a model, making predictions, and evaluating the results with suitable metrics.

XGBoost Hands-on

In this module, we will work through a practical, step-by-step implementation of XGBoost on a dataset.

Get access to the complete curriculum once you enroll in the course

2.25 Hours

Beginner

Learner reviews of the Free Courses

5.0

What our learners enjoyed the most

Skill & tools

61% of learners found all the desired skills & tools

Frequently Asked Questions

What prerequisites are required to enrol in this Free Introduction to XGBoost course?

You do not need any prior knowledge to enrol in this Introduction to XGBoost course.

How long does it take to complete this Free Introduction to XGBoost course?

It is a 1.5-hour course, but it is self-paced. Once you enrol, you can take your own time to complete the course.

Will I have lifetime access to the free course?

Yes, once you enrol in the course, you will have lifetime access to any of the Great Learning Academy’s free courses. You can log in and learn whenever you want to.

Will I get a certificate after completing this Free Introduction to XGBoost course?

Yes, you will get a certificate of completion after completing all the modules and passing the assessment.

How much does this Introduction to XGBoost course cost?

It is an entirely free course from Great Learning Academy.

Is there any limit on how many times I can take this free course?

No. There is no limit. Once you enrol in the Free Introduction to XGBoost course, you have lifetime access to it. So, you can log in anytime and learn it for free online.

Who is eligible to take this Free Introduction to XGBoost course?

You do not need any prerequisites to take the course, so enroll today and learn it for free online.

Introduction to XGBoost

XGBoost, which stands for eXtreme Gradient Boosting, is a popular open-source library for gradient-boosted decision trees, known for its efficiency and performance, particularly on structured/tabular data problems. Originally developed by Tianqi Chen, it is now maintained by the DMLC open-source community and released under the Apache 2.0 license. XGBoost has gained widespread adoption in both academic research and industry applications.

Key Features:

Gradient Boosting Framework:

XGBoost belongs to the family of ensemble learning methods, specifically gradient boosting. It builds a strong predictive model by combining the predictions of multiple weak learners (typically decision trees) sequentially. Each subsequent tree corrects the errors of the previous ones, leading to a robust and accurate model.
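The sequential idea can be sketched in a few lines of plain Python. This is a toy illustration, not XGBoost's implementation: it assumes squared-error loss, a single numeric feature, and depth-1 trees (stumps) as weak learners. All function names and the sample data are made up for the example.

```python
# Toy gradient boosting for squared-error regression on a single feature.
# Weak learner: a depth-1 tree (stump). Illustrative only, not XGBoost's code.

def fit_stump(xs, residuals):
    """Return (threshold, left_value, right_value) minimising squared error.

    Assumes xs contains at least two distinct values.
    """
    best = None
    # Candidate thresholds: every distinct value except the largest,
    # so that both sides of the split are non-empty.
    for threshold in sorted(set(xs))[:-1]:
        left = [r for x, r in zip(xs, residuals) if x <= threshold]
        right = [r for x, r in zip(xs, residuals) if x > threshold]
        left_value = sum(left) / len(left)
        right_value = sum(right) / len(right)
        sse = (sum((r - left_value) ** 2 for r in left)
               + sum((r - right_value) ** 2 for r in right))
        if best is None or sse < best[0]:
            best = (sse, threshold, left_value, right_value)
    return best[1:]


def predict_stump(stump, x):
    threshold, left_value, right_value = stump
    return left_value if x <= threshold else right_value


def fit_boosted(xs, ys, n_rounds=20, learning_rate=0.5):
    """Fit stumps one after another, each on the current residuals."""
    base = sum(ys) / len(ys)          # initial prediction: mean of the targets
    preds = [base] * len(ys)
    stumps = []
    for _ in range(n_rounds):
        # For squared error, the residual is the negative gradient of the loss.
        residuals = [y - p for y, p in zip(ys, preds)]
        stump = fit_stump(xs, residuals)
        stumps.append(stump)
        preds = [p + learning_rate * predict_stump(stump, x)
                 for p, x in zip(preds, xs)]
    return base, learning_rate, stumps


def predict_boosted(model, x):
    base, learning_rate, stumps = model
    return base + learning_rate * sum(predict_stump(s, x) for s in stumps)


def mean_squared_error(model, xs, ys):
    return sum((y - predict_boosted(model, x)) ** 2
               for x, y in zip(xs, ys)) / len(ys)


xs = [1, 2, 3, 4, 5, 6, 7, 8]
ys = [1.0, 1.2, 0.9, 1.1, 3.0, 3.2, 2.9, 3.1]
for rounds in (0, 1, 20):
    model = fit_boosted(xs, ys, n_rounds=rounds)
    print(rounds, round(mean_squared_error(model, xs, ys), 4))
```

The training error shrinks as rounds are added, because each new stump is fitted to what the previous ones left unexplained.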

Regularization Techniques:

XGBoost integrates L1 (LASSO) and L2 (Ridge) regularization terms into its objective function. This helps prevent overfitting by penalizing complex models. Regularization is crucial, especially when dealing with high-dimensional data or datasets with a large number of features.
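To see how the two penalties act on a single leaf, here is a small sketch of the commonly cited closed form for a leaf's weight. It assumes the leaf's gradient sum G and Hessian sum H are already known, applies L1 as a soft threshold on G, and applies L2 as an extra term in the denominator. The function name and the example numbers are illustrative; the parameter names mirror the `reg_alpha` and `reg_lambda` options of the library's scikit-learn style interface.

```python
def leaf_weight(grad_sum, hess_sum, reg_lambda=1.0, reg_alpha=0.0):
    """Regularised leaf weight: -soft_threshold(G, alpha) / (H + lambda)."""
    # L1 penalty: shrink the gradient sum towards zero by alpha,
    # and clamp it to exactly zero if it is smaller than alpha in magnitude.
    if grad_sum > reg_alpha:
        shrunk = grad_sum - reg_alpha
    elif grad_sum < -reg_alpha:
        shrunk = grad_sum + reg_alpha
    else:
        shrunk = 0.0
    # L2 penalty: a larger lambda enlarges the denominator and damps the weight.
    return -shrunk / (hess_sum + reg_lambda)


# A leaf holding three rows with squared-error loss (Hessian 1 per row)
# and a gradient sum of -4.0 (predictions currently too low).
print(leaf_weight(-4.0, 3.0, reg_lambda=0.0, reg_alpha=0.0))  # no regularisation
print(leaf_weight(-4.0, 3.0, reg_lambda=1.0, reg_alpha=0.0))  # L2 only
print(leaf_weight(-4.0, 3.0, reg_lambda=1.0, reg_alpha=1.0))  # L1 and L2
print(leaf_weight(-4.0, 3.0, reg_lambda=1.0, reg_alpha=5.0))  # L1 large enough to zero the leaf
```

Both penalties pull the leaf weight towards zero; L1 can remove a leaf's contribution entirely, while L2 only shrinks it.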

Parallel and Distributed Computing:

XGBoost is designed to be computationally efficient. It supports parallel and distributed computing, enabling faster model training, especially on large datasets. Because boosting is sequential, the trees themselves are built one after another; the parallelism comes from within the construction of each tree, where the search for the best split is spread across features and CPU threads.

Tree Pruning:

To avoid overfitting, XGBoost employs a strategy known as tree pruning. It grows each tree up to a maximum depth and then prunes backward, removing splits whose reduction in loss does not exceed a minimum threshold. This results in a more compact and effective model.
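The pruning decision can be illustrated with the split-gain formula from the gradient boosting derivation used by XGBoost. The sketch below assumes the gradient and Hessian sums of the two children are known and omits the L1 term for brevity. `gamma` plays the role of the minimum loss reduction (the library exposes it as `gamma`, also called `min_split_loss`). The function names and numbers are illustrative assumptions.

```python
def structure_score(grad_sum, hess_sum, reg_lambda):
    """Quality of a leaf: G^2 / (H + lambda). Larger is better."""
    return grad_sum ** 2 / (hess_sum + reg_lambda)


def split_gain(g_left, h_left, g_right, h_right, reg_lambda=1.0, gamma=0.0):
    """Loss reduction from splitting a node, minus the per-split penalty gamma."""
    children = (structure_score(g_left, h_left, reg_lambda)
                + structure_score(g_right, h_right, reg_lambda))
    parent = structure_score(g_left + g_right, h_left + h_right, reg_lambda)
    return 0.5 * (children - parent) - gamma


def keep_split(g_left, h_left, g_right, h_right, reg_lambda=1.0, gamma=0.0):
    """A split survives pruning only if its penalised gain is positive."""
    return split_gain(g_left, h_left, g_right, h_right, reg_lambda, gamma) > 0


# The same candidate split, judged under two different values of gamma.
args = (-4.0, 2.0, 4.0, 2.0)
print(split_gain(*args, gamma=0.0), keep_split(*args, gamma=0.0))
print(split_gain(*args, gamma=6.0), keep_split(*args, gamma=6.0))
```

Raising `gamma` makes the model more conservative: splits that only slightly reduce the loss are cut away.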

Handling Missing Data:

XGBoost has built-in capabilities to handle missing data, a common issue in real-world datasets. Rather than imputing values, the algorithm learns a default direction for each split during training: rows whose value for the split feature is missing are sent to whichever branch reduces the loss more. This reduces the need for extensive preprocessing of missing values.
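A minimal sketch of that idea for one candidate split follows. It assumes per-row gradients and Hessians are available, represents a missing value as `None`, and scores each option with the same regularised gain used for ordinary splits. The function names and the sample data are hypothetical, and the real library does this far more efficiently.

```python
def _score(grad_sum, hess_sum, reg_lambda):
    return grad_sum ** 2 / (hess_sum + reg_lambda)


def best_default_direction(values, grads, hess, threshold, reg_lambda=1.0):
    """Try sending the missing rows left, then right, and keep the better option.

    Rows with value < threshold go left, others go right; None means missing.
    Returns (direction, gain).
    """
    g_left = h_left = g_right = h_right = g_miss = h_miss = 0.0
    for value, g, h in zip(values, grads, hess):
        if value is None:
            g_miss += g
            h_miss += h
        elif value < threshold:
            g_left += g
            h_left += h
        else:
            g_right += g
            h_right += h

    parent = _score(g_left + g_right + g_miss, h_left + h_right + h_miss, reg_lambda)
    gain_if_left = 0.5 * (_score(g_left + g_miss, h_left + h_miss, reg_lambda)
                          + _score(g_right, h_right, reg_lambda) - parent)
    gain_if_right = 0.5 * (_score(g_left, h_left, reg_lambda)
                           + _score(g_right + g_miss, h_right + h_miss, reg_lambda) - parent)
    if gain_if_left >= gain_if_right:
        return "left", gain_if_left
    return "right", gain_if_right


# Two rows are missing the feature; their gradients resemble the low-value rows.
values = [1.0, 2.0, None, 8.0, 9.0, None]
grads = [-1.0, -1.2, -0.9, 1.0, 1.1, -1.1]
hess = [1.0] * len(values)
print(best_default_direction(values, grads, hess, threshold=5.0))
```

Here the missing rows behave like the rows on the left side of the split, so sending them left gives the larger gain.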

Cross-Validation:

XGBoost includes a built-in cross-validation routine that evaluates the model on held-out folds at each boosting round. This helps in tuning hyperparameters, such as the number of rounds, and makes overfitting visible by giving a more reliable estimate of the model's generalization error than the training score alone.
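The underlying procedure, k-fold cross-validation, can be sketched independently of the library. The helper names below are made up, the folds are contiguous (no shuffling), and the "model" is a deliberately trivial mean predictor so that the example stays self-contained. In practice the library's own `xgboost.cv` function would be used instead.

```python
def kfold_indices(n_samples, n_folds):
    """Yield (train_idx, valid_idx) pairs; fold sizes differ by at most one."""
    fold_sizes = [n_samples // n_folds + (1 if i < n_samples % n_folds else 0)
                  for i in range(n_folds)]
    start = 0
    for size in fold_sizes:
        valid = list(range(start, start + size))
        train = list(range(0, start)) + list(range(start + size, n_samples))
        yield train, valid
        start += size


def cross_validate(xs, ys, fit, predict, n_folds=4):
    """Return the mean squared error on the held-out rows of each fold."""
    scores = []
    for train, valid in kfold_indices(len(xs), n_folds):
        model = fit([xs[i] for i in train], [ys[i] for i in train])
        errors = [(ys[i] - predict(model, xs[i])) ** 2 for i in valid]
        scores.append(sum(errors) / len(errors))
    return scores


def fit_mean(train_x, train_y):
    # Placeholder model: always predicts the mean of the training targets.
    return sum(train_y) / len(train_y)


def predict_mean(model, x):
    return model


xs = list(range(10))
ys = [2.0 * x + 1.0 for x in xs]
scores = cross_validate(xs, ys, fit_mean, predict_mean, n_folds=5)
print(scores, sum(scores) / len(scores))
```

Each row is used for validation exactly once, so the averaged score reflects performance on data the model did not see during fitting.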

Use Cases:

Classification:

XGBoost is widely used for binary and multiclass classification problems. Its ability to handle imbalanced datasets and produce accurate predictions makes it a popular choice in scenarios like fraud detection, spam filtering, and medical diagnosis.
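For imbalanced binary problems, one common starting point is to weight the positive class by the ratio of negative to positive examples, which is the typical value the XGBoost documentation suggests for its `scale_pos_weight` parameter. The helper below is an illustrative sketch with a made-up name; it only computes the ratio and does not call the library.

```python
def suggested_scale_pos_weight(labels):
    """Ratio of negative to positive examples in a list of 0/1 labels."""
    positives = sum(1 for y in labels if y == 1)
    negatives = len(labels) - positives
    if positives == 0:
        raise ValueError("no positive examples; the ratio is undefined")
    return negatives / positives


# Example: a fraud-style dataset with 5 positives among 100 rows.
labels = [0] * 95 + [1] * 5
print(suggested_scale_pos_weight(labels))
```

The resulting number is a starting value to tune from, not a guaranteed optimum.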

Regression:

In regression tasks, where the goal is to predict a continuous variable, XGBoost has demonstrated excellent performance. It is employed in areas such as predicting house prices, stock prices, and demand forecasting.

Ranking:

XGBoost is also effective in ranking problems, such as search engine result ranking or recommender systems. Its ability to capture complex relationships within data makes it well-suited for tasks involving the ordering of items.

Anomaly Detection:

XGBoost can also be applied to anomaly detection when labeled examples of anomalies are available. Trained as a classifier on normal and anomalous cases, it learns the patterns of normal behavior and can flag instances that deviate from them.

Community and Support:

XGBoost has a vibrant community of users and contributors. Its open-source nature has led to widespread adoption, and it provides interfaces for several programming languages, including Python, R, Java, and others. The community actively contributes to the improvement and maintenance of the library, ensuring that it stays up-to-date with the latest developments in machine learning.

In conclusion, XGBoost has established itself as a go-to algorithm for a wide range of machine learning tasks. Its combination of efficiency, scalability, and predictive performance has made it a favorite among data scientists and machine learning practitioners. Whether in competitions like Kaggle or real-world applications, XGBoost continues to prove its effectiveness in delivering accurate and reliable predictions.