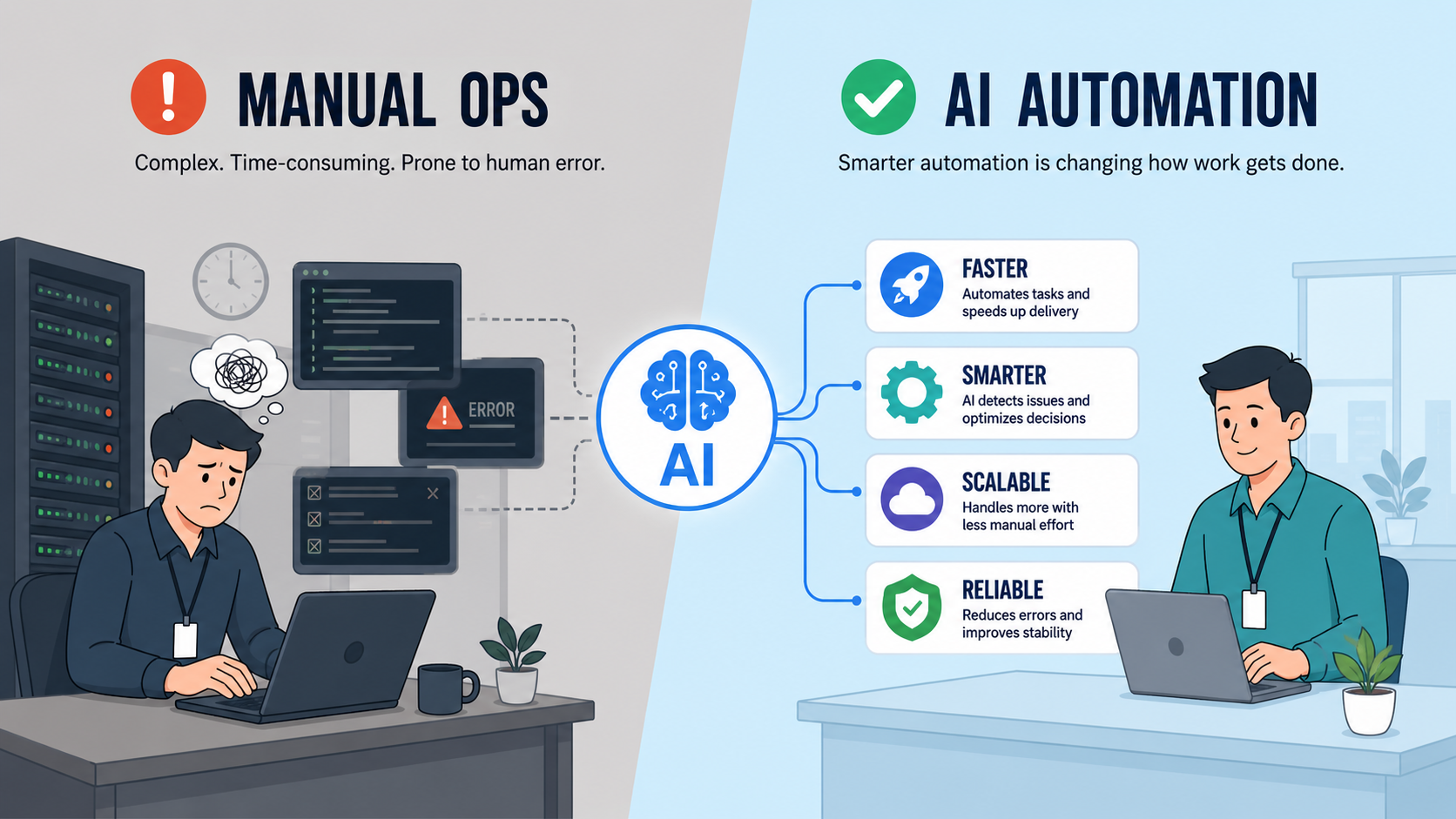

AI-powered automation is quietly taking over the repetitive, rule-based tasks that once defined DevOps and IT Operations: deployments, monitoring, incident response, and even parts of infrastructure management.

What used to require constant human intervention is now increasingly handled by intelligent systems that can predict failures, optimize performance, and self-correct in real time.

Naturally, this shift raises a pressing concern: if machines can manage systems, where does that leave the people who built their careers around managing them?

Here’s the answer: DevOps and IT Operations roles are not at risk of extinction, but they are under pressure to evolve faster than ever before.

In this blog, we’ll break down how AI is reshaping these roles, what tasks are being automated, and how professionals can reposition themselves to stay indispensable.

What Is AIOps and AI-Powered Automation in DevOps?

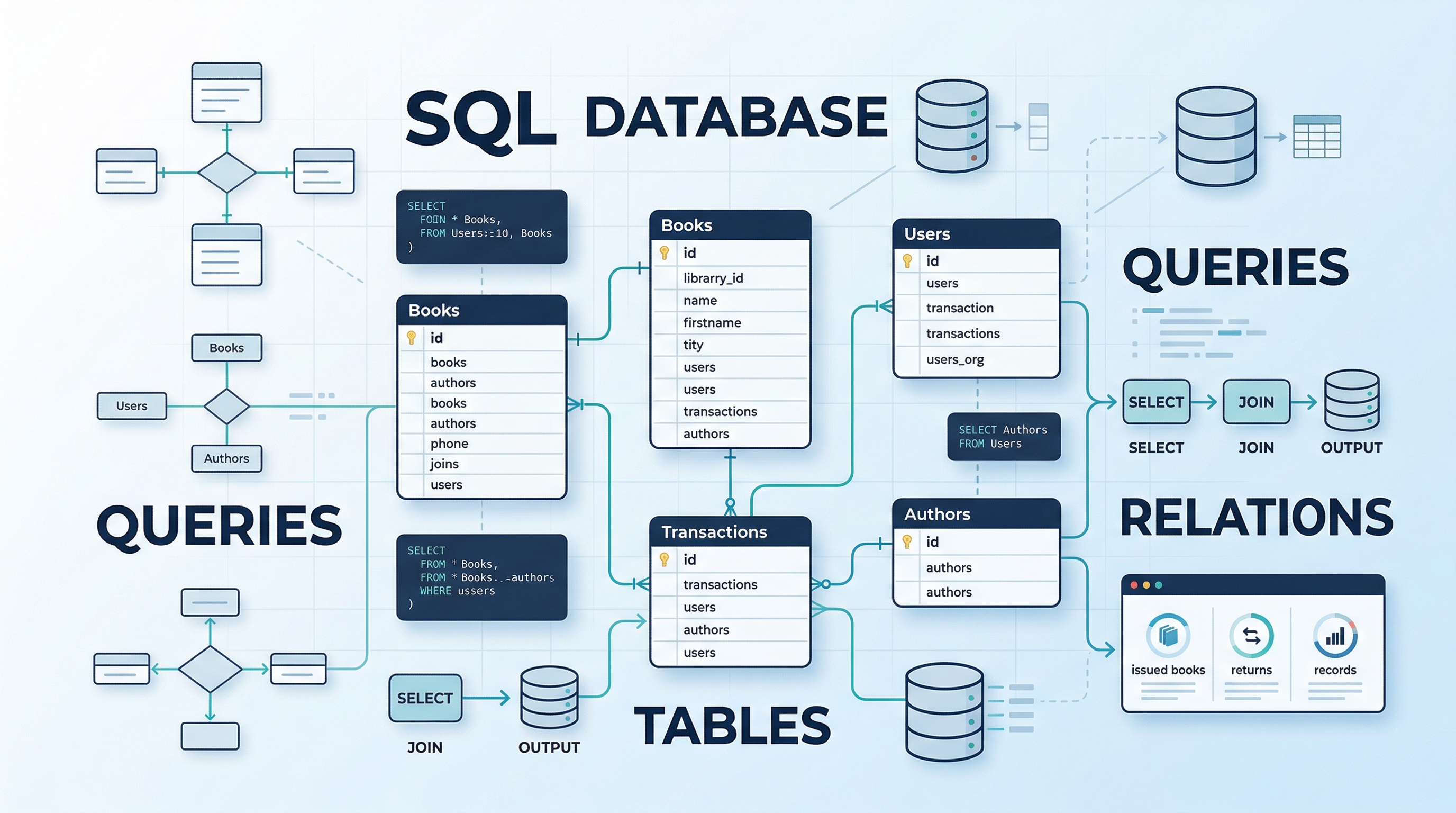

AIOps (Artificial Intelligence for IT Operations) refers to the application of machine learning (ML), natural language processing (NLP), and big data analytics to automate and enhance IT operational tasks.

It functions as the intelligence layer across the DevOps lifecycle, ingesting telemetry data, correlating signals, and enabling autonomous or semi-autonomous responses.

How AI Integrates Across the DevOps Lifecycle

AI is not confined to a single phase; it permeates the entire software delivery chain:

- Development: LLM-powered coding assistants such as GitHub Copilot and Amazon CodeWhisperer generate boilerplate code, write unit tests, and suggest Infrastructure as Code (IaC) templates. Pair this with generative AI for business use cases, and the productivity gains become considerable.

- Deployment: AI-driven CI/CD tools detect build failures, classify error types, and recommend rollback strategies autonomously.

- Monitoring and Observability: ML-based anomaly detection engines analyze time-series metrics, distributed traces, and log streams to detect deviations from baseline performance, often before the on-call engineer is even aware.

- Incident Management: Intelligent alert correlation reduces Mean Time to Detect (MTTD) by filtering noise and surfacing actionable signals. Auto-remediation playbooks triggered by predictive failure models are now a standard offering in platforms like PagerDuty, Dynatrace, and Moogsoft.
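
To make the two reliability metrics in this article concrete, here is a minimal sketch of how MTTD and MTTR could be computed from incident records. The record fields (`started`, `detected`, `resolved`, in seconds) are hypothetical, and the sketch assumes both metrics are measured from the moment the failure began; some teams measure MTTR from detection instead.

```python
from statistics import mean

# Hypothetical incident records; all timestamps are seconds from failure start.
incidents = [
    {"started": 0, "detected": 120, "resolved": 1320},
    {"started": 0, "detected": 60, "resolved": 660},
    {"started": 0, "detected": 300, "resolved": 2100},
]


def mttd(records):
    """Mean Time to Detect: average gap between failure start and detection."""
    return mean(r["detected"] - r["started"] for r in records)


def mttr(records):
    """Mean Time to Recover: average gap between failure start and resolution
    (assumption: measured from start, not from detection)."""
    return mean(r["resolved"] - r["started"] for r in records)


print(f"MTTD: {mttd(incidents)} s, MTTR: {mttr(incidents)} s")
```

Tracking both numbers before and after introducing alert correlation is one simple way to check whether the tooling is actually helping.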

If you're new to this space, Great Learning's free AIOps Fundamentals course is an excellent foundation, covering AI-driven monitoring, event correlation, and operational intelligence from the ground up.

Wondering how the broader DevOps toolchain is evolving?

Explore this guide to essential tools for DevOps professionals to understand what's being augmented and what's being automated.

What Tasks Can AI Handle?

Understanding which tasks AI can reliably perform helps professionals focus their energy on higher-order responsibilities.

1. Scripting and Code Generation

AI generates boilerplate Terraform and Ansible scripts, writes CI/CD pipeline configurations (GitHub Actions, Jenkins DSL), and scaffolds IaC templates with minimal human prompting. This significantly reduces setup time and minimizes configuration drift.

- Prompt-to-pipeline generation using LLMs is already in production at several enterprises

- AI pair programmers can reduce junior-level scripting time by up to 40–50%

- Code review bots flag vulnerabilities, suggest refactors, and enforce style guides automatically

Automating infrastructure means writing better code faster. Great Learning's free Introduction to DevOps course walks you through CI/CD pipelines, containerization, and deployment automation, skills that remain foundational even as AI tools evolve.


2. Alert Triage and Noise Reduction

Modern observability platforms generate thousands of alerts daily. AIOps tools apply ML-based correlation to merge related events, suppress redundant notifications, and escalate only high-fidelity incidents. This directly addresses alert fatigue, one of the most persistent challenges in site reliability engineering (SRE).
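
The core idea of merging related events can be sketched without any ML at all. The example below is a simplified, rule-based stand-in for what AIOps platforms do with learned correlation: it groups alerts that share a service and symptom and arrive within a short window of each other. The `Alert` fields and the 300-second window are illustrative assumptions, not any vendor's schema.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Alert:
    service: str      # hypothetical field: the emitting service
    symptom: str      # hypothetical field: normalized symptom name
    timestamp: float  # seconds since some reference point


def correlate(alerts, window_seconds=300):
    """Merge alerts with the same (service, symptom) into one incident
    when each alert arrives within `window_seconds` of the previous one."""
    by_key = defaultdict(list)
    for alert in sorted(alerts, key=lambda a: a.timestamp):
        by_key[(alert.service, alert.symptom)].append(alert)

    incidents = []
    for key, group in by_key.items():
        current = [group[0]]
        for alert in group[1:]:
            if alert.timestamp - current[-1].timestamp <= window_seconds:
                current.append(alert)  # same burst: fold into the open incident
            else:
                incidents.append((key, current))
                current = [alert]      # gap too large: start a new incident
        incidents.append((key, current))
    return incidents


alerts = [
    Alert("checkout", "high_latency", 0),
    Alert("checkout", "high_latency", 60),
    Alert("checkout", "high_latency", 120),
    Alert("search", "error_rate", 90),
    Alert("checkout", "high_latency", 1000),
]
incidents = correlate(alerts)
for (service, symptom), group in incidents:
    print(f"{service}/{symptom}: {len(group)} alert(s) merged")
```

Here five raw alerts collapse into three incidents. Production tools go further by learning which services and symptoms tend to fail together, rather than relying on exact key matches.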

3. Anomaly Detection and Predictive Monitoring

Time-series ML models such as LSTM (Long Short-Term Memory) networks detect performance anomalies and predict capacity saturation before these issues turn into user-impacting outages. Predictive autoscaling, adjusting compute resources based on forecasted traffic patterns, is a direct output of this capability.
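
An LSTM is too large to show here, so the sketch below swaps in a much simpler statistical technique, a rolling z-score, to illustrate what "deviation from baseline" means in practice. The window size, threshold, and sample latencies are illustrative assumptions.

```python
from statistics import mean, stdev


def detect_anomalies(values, window=5, threshold=3.0):
    """Return the indices of points that sit more than `threshold` standard
    deviations away from the mean of the preceding `window` points."""
    anomalies = []
    for i in range(window, len(values)):
        baseline = values[i - window:i]
        mu = mean(baseline)
        sigma = stdev(baseline)
        if sigma == 0:
            # Perfectly flat baseline: treat any change as a deviation.
            if values[i] != mu:
                anomalies.append(i)
            continue
        if abs(values[i] - mu) / sigma > threshold:
            anomalies.append(i)
    return anomalies


# Hypothetical latency samples in milliseconds, with one obvious spike.
latency_ms = [100, 102, 98, 101, 99, 100, 103, 97, 250, 101, 100]
print(detect_anomalies(latency_ms))  # prints [8]
```

Note that the spike inflates the baseline for the next few points, which is one reason real systems prefer more robust models over a plain rolling window.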

4. Routine Troubleshooting and Auto-Remediation

AI-powered runbook automation tools execute predefined resolution workflows for known failure modes, such as restarting services, clearing disk space, and rolling back deployments, without requiring human intervention. This significantly reduces Mean Time to Recover (MTTR).
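
At its simplest, runbook automation is a lookup from a known failure mode to a predefined action, with everything else escalated to a person. The sketch below assumes hypothetical failure-mode names and stub actions that only return a description; a real runbook would call your orchestration or configuration tooling instead.

```python
def restart_service(ctx):
    # Stub: a real action would call the service manager or orchestrator.
    return f"restarted {ctx['service']}"


def clear_disk_space(ctx):
    # Stub: a real action would prune logs or temp files on the host.
    return f"cleared temp files on {ctx['host']}"


def roll_back_deployment(ctx):
    # Stub: a real action would trigger the deployment tool's rollback.
    return f"rolled back {ctx['service']} to {ctx['previous_version']}"


# Hypothetical mapping from known failure modes to resolution workflows.
RUNBOOKS = {
    "service_unresponsive": restart_service,
    "disk_full": clear_disk_space,
    "bad_deploy": roll_back_deployment,
}


def remediate(failure_mode, ctx):
    """Run the matching runbook, or escalate when the failure mode is unknown."""
    action = RUNBOOKS.get(failure_mode)
    if action is None:
        return ("escalated", f"no runbook for {failure_mode!r}; paging on-call")
    return ("auto_remediated", action(ctx))


print(remediate("disk_full", {"host": "web-1"}))
print(remediate("split_brain", {"service": "db"}))
```

The explicit escalation path matters as much as the automation: unknown failure modes should reach a human rather than trigger a best-guess fix.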

5. Resource Optimization and Scaling

Cloud cost intelligence platforms powered by ML continuously right-size compute, storage, and network resources based on usage heuristics, eliminating over-provisioning and reducing cloud spend.
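
A usage heuristic for right-sizing can be as simple as sizing for the 95th-percentile load plus headroom. The sketch below assumes CPU utilization samples expressed as fractions of current capacity and a hypothetical 60% target utilization; commercial platforms use richer signals such as memory, I/O, and seasonality.

```python
import math


def percentile(samples, pct):
    """Nearest-rank percentile of a non-empty list of numbers."""
    ordered = sorted(samples)
    rank = math.ceil(pct / 100 * len(ordered))
    return ordered[max(rank, 1) - 1]


def recommend_vcpus(cpu_utilization, current_vcpus, target_utilization=0.6):
    """Suggest a vCPU count so that p95 load lands near the target utilization."""
    p95 = percentile(cpu_utilization, 95)
    needed = current_vcpus * p95 / target_utilization
    return max(1, math.ceil(needed))


# Hypothetical utilization samples for a 16-vCPU instance that is mostly idle.
usage = [0.10, 0.12, 0.15, 0.11, 0.20, 0.18, 0.14, 0.13, 0.16, 0.22]
print(recommend_vcpus(usage, current_vcpus=16))  # prints 6
```

In this example the instance peaks at 22% utilization, so the heuristic suggests dropping from 16 vCPUs to 6 while still leaving headroom above the observed peak.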

What Requires Human Expertise: The Irreplaceable Skills

AI is a powerful productivity multiplier, but several domains remain firmly human territory.

1. System Design and Architectural Thinking

Designing fault-tolerant, distributed systems that align with business goals requires contextual judgment, an understanding of organizational constraints and regulatory requirements, and a clear view of long-term technical debt. No ML model can contextualize a company's strategic roadmap and architect it accordingly.

2. Complex Incident Response and Decision-Making

During high-severity outages, such as P1 incidents with cascading failures across microservices, experienced engineers must coordinate cross-functionally, make real-time trade-offs, and apply domain-specific intuition. AI can surface data; humans must act on it.

3. Platform Engineering and Developer Experience

The rise of Internal Developer Platforms (IDPs) and golden paths (curated, opinionated workflows that guide engineering teams) requires deep empathy with developer needs, stakeholder communication, and iterative design. This is a fundamentally human-centered discipline.

4. Business Context and Strategic Alignment

Understanding why a system exists, prioritizing technical trade-offs against business outcomes, and aligning infrastructure decisions with organizational objectives cannot be delegated to an algorithm. IT specialists who bridge the gap between technical depth and business acumen remain exceptionally valuable. See this IT specialist guide for a broader view of the role.

5. Governance, Security, and Ethical Oversight

AI-driven automation is only as trustworthy as its guardrails. Human oversight is essential to validate auto-remediation actions, audit ML model decisions for unintended bias, and ensure compliance with data protection regulations. AI in DevSecOps pipelines must be governed, not blindly trusted.

New Skills DevOps and IT Operations Professionals Must Learn

The Upskilling Trends Report 2025–26 reveals that 81% of professionals intend to pursue upskilling this year, and 44% have identified Machine Learning and AI as their top domain of choice.

The skills that defined a strong DevOps engineer three years ago are now table stakes. What differentiates professionals today is the ability to operate at the intersection of AI, cloud infrastructure, and intelligent automation.

Below are the critical skills to build and, more importantly, why each one directly determines your long-term relevance in an AI-augmented operations era.

1. AI/ML Fundamentals for Engineers

Understanding how machine learning models are trained, validated, and deployed is no longer optional for DevOps and IT operations professionals.

As AIOps platforms embed ML models into alerting, anomaly detection, and auto-remediation workflows, engineers who cannot interpret model outputs or, worse, cannot recognize when a model is behaving incorrectly become liabilities rather than assets.

Knowing the fundamentals of supervised learning, model drift, and inference pipelines allows you to configure AIOps tools intelligently, evaluate their reliability, and collaborate effectively with data science teams on MLOps workflows.

It also positions you to contribute to AI model deployment and monitoring, an emerging responsibility in AI-first engineering organizations.

2. Generative AI and Large Language Models (LLMs) for Operations

Generative AI is actively being used in DevOps environments to produce Infrastructure as Code, draft runbooks, summarize complex incident timelines, generate test scripts from plain-language requirements, and auto-complete CI/CD pipeline configurations.

Engineers who understand how LLMs work, including their architecture, failure modes, hallucination risks, and practical capabilities, can deploy them as governed, reliable tools in production workflows.

Those who lack this understanding will find themselves dependent on outputs they cannot audit, correct, or trust in high-stakes incident scenarios. Learning Generative AI for business and LLM fundamentals gives you the technical authority to evaluate, configure, and ultimately govern AI tools embedded in your systems, rather than being governed by them.

To build a clear foundational picture, watch Key Skills to Kickstart your Career in Artificial Intelligence, a concise breakdown of AI competencies most relevant to technology professionals.

To further strengthen your skills in Generative AI and LLMs, enroll in the PG Program in Artificial Intelligence and Machine Learning at the University of Texas at Austin. The program goes well beyond tool usage; it gives you an applied, hands-on understanding of how these models are built, fine-tuned, and deployed in enterprise environments.


For DevOps and IT professionals who want real command over AI tools rather than surface-level familiarity, this is a rigorous, structured path backed by one of the world's leading universities.

3. Prompt Engineering for DevOps and IT Automation

Prompt engineering, the practice of crafting precise and context-rich instructions to generate reliable outputs from language models, is one of the most practical AI skills for operations professionals.

In DevOps, tasks such as generating Terraform modules, writing post-incident reviews, querying infrastructure costs, or summarising alerts all depend on prompt quality.

Engineers who approach prompt engineering as a structured skill, using techniques such as chain-of-thought reasoning, role-based prompting, output structuring, and few-shot examples, consistently achieve better results than those who treat it casually.

As AI copilots and automation tools become standard, this skill is quickly becoming as valuable as scripting.
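
The techniques named above can be combined in a small, reusable prompt builder. This sketch only assembles the prompt text and deliberately makes no model API call; the function name, the example alerts, and the JSON output format are all illustrative assumptions.

```python
def build_prompt(role, task, examples, output_format):
    """Assemble a prompt using role-based prompting, few-shot examples,
    a chain-of-thought instruction, and an explicit output structure."""
    lines = [f"You are {role}.", ""]
    for example_input, example_output in examples:  # few-shot examples
        lines += [f"Input: {example_input}", f"Output: {example_output}", ""]
    lines += [
        f"Task: {task}",
        "Reason step by step before giving the final answer.",  # chain of thought
        f"Return the final answer as {output_format}.",         # output structuring
    ]
    return "\n".join(lines)


prompt = build_prompt(
    role="a senior site reliability engineer",
    task="Summarise this alert: p99 latency on checkout rose from 200 ms to 2 s",
    examples=[
        ("disk usage at 95% on web-1",
         '{"severity": "high", "summary": "web-1 is nearly out of disk"}'),
    ],
    output_format="a JSON object with keys 'severity' and 'summary'",
)
print(prompt)
```

Keeping prompts in code like this makes them reviewable and versionable, the same way pipeline configurations are.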

To master this essential capability for your IT workflows, explore the free Prompt Engineering for ChatGPT course. By learning to construct high-fidelity prompts and apply strategies such as Chain of Thought (CoT) prompting, you can steer language models toward step-by-step logical reasoning.


4. Agentic AI and Autonomous Workflow Design

AI Agents and Agentic AI refer to systems that plan, reason, and execute multi-step tasks autonomously, operating as independent agents that handle complex workflows without requiring a human prompt at each step.

In IT operations, this is already manifesting as self-healing infrastructure agents, autonomous incident triage systems, and AI-driven capacity planning workflows that detect, diagnose, and remediate issues in sequence without waiting for engineer intervention. This is the next frontier of AIOps, and it is arriving fast.

IT operations professionals who understand how to design and govern agentic systems, defining their decision boundaries, setting escalation conditions, and ensuring appropriate human oversight at critical junctures, will be the ones organizations trust to build and manage these capabilities.
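
Decision boundaries and escalation conditions can be expressed as an explicit policy gate that every agent-proposed action must pass. The sketch below is one possible shape, under stated assumptions: the action names, the confidence score, and the "blast radius" measure are all hypothetical, and real limits would come from your own risk policy.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ProposedAction:
    name: str          # what the agent wants to do
    environment: str   # e.g. "staging" or "production"
    confidence: float  # agent's self-reported confidence, 0.0 to 1.0
    blast_radius: int  # hypothetical measure: number of hosts affected


# Decision boundary: the only actions an agent may ever take on its own.
ALLOWED_ACTIONS = {"restart_service", "scale_out", "clear_cache"}


def decide(action, min_confidence=0.9, max_blast_radius=5):
    """Return 'allow', 'escalate' (human approval needed), or 'deny'."""
    if action.name not in ALLOWED_ACTIONS:
        return "deny"
    if action.environment == "production" and action.blast_radius > max_blast_radius:
        return "escalate"
    if action.confidence < min_confidence:
        return "escalate"
    return "allow"


print(decide(ProposedAction("restart_service", "production", 0.95, 2)))
print(decide(ProposedAction("scale_out", "production", 0.95, 20)))
print(decide(ProposedAction("drop_database", "production", 0.99, 1)))
```

Placing the gate outside the agent means the policy stays auditable and cannot be reasoned around by the model itself.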

Understanding agentic AI is no longer a future-facing investment; it is a present-day differentiator. To build expertise in this specific domain, explore the Certificate Program in Agentic AI from Johns Hopkins University.

You will learn to scale workflows by mastering Multi-Agent Systems (MAS) integrated with Small Language Models, establish crucial production observability for monitoring agent latency and costs, and evaluate system reasoning through DeepEval and Human-in-the-Loop (HITL) frameworks.

5. Machine Learning for Predictive Operations and AIOps

Understanding how machine learning models are trained, validated, and monitored gives DevOps and IT operations professionals a strong edge when working with AIOps platforms.

Skills such as distinguishing between supervised and unsupervised learning, identifying model drift in anomaly detection, and tuning ML-based alerting thresholds using historical data are now practical operational capabilities, not just data science concepts.
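
As a rough illustration of spotting drift, the sketch below compares recent metric values against the data a detector was originally tuned on and flags a large shift in the mean. This is a deliberately simple heuristic under stated assumptions (the sample values and the limit of 2 standard deviations are illustrative); production drift monitoring usually relies on proper statistical tests over full distributions.

```python
from statistics import mean, stdev


def drift_score(baseline, recent):
    """Shift of the recent mean from the baseline mean, expressed in
    baseline standard deviations."""
    sigma = stdev(baseline)
    if sigma == 0:
        raise ValueError("baseline has no variance; cannot scale the shift")
    return abs(mean(recent) - mean(baseline)) / sigma


def has_drifted(baseline, recent, limit=2.0):
    """Flag drift when the mean has moved more than `limit` deviations."""
    return drift_score(baseline, recent) > limit


# Hypothetical request latencies (ms) used when thresholds were first tuned.
baseline = [100, 102, 98, 101, 99]

print(has_drifted(baseline, [101, 99, 100]))   # prints False
print(has_drifted(baseline, [120, 118, 122]))  # prints True
```

When drift is flagged, alert thresholds tuned on the old baseline are likely stale and worth re-deriving from more recent history.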

As AIOps tools integrate ML deeper into monitoring, incident management, and capacity planning, engineers who can evaluate and configure these models effectively will lead implementations.

This also creates opportunities in MLOps, which focuses on managing ML models in production and is emerging as one of the fastest-growing adjacent roles to DevOps.

To develop the strong technical foundation needed for predictive operations, take the Machine Learning Essentials with Python premium course.


By mastering core techniques like regression, classification, and ensemble methods, and learning to optimize model performance through cross-validation and hyperparameter tuning, you will gain the hands-on skills required to solve real-world data problems.

6. Cloud-Native AI Deployment and AWS Integration

Every AI model, LLM deployment, and AIOps platform runs on cloud infrastructure.

DevOps and IT operations professionals who can deploy and manage AI workloads on cloud-native systems, including containerized model serving, managed ML services like Amazon SageMaker and Amazon Bedrock, event-driven pipelines, and AI-focused observability, operate at the intersection of infrastructure and AI.

This goes beyond maintaining systems; it involves building the operational layer that makes AI scalable and reliable. Cloud computing is already a key upskilling priority, cited by 27% of professionals in the Great Learning report, and its importance will only grow as AI workloads drive enterprise cloud adoption.

The PG Program in Cloud Computing by the University of Texas at Austin is built for exactly this need, covering cloud architecture, AWS services, and DevOps integration in a curriculum co-developed with one of the world's leading cloud providers.


For IT operations professionals building toward AI-era infrastructure roles, this program provides the cloud-native foundation that underpins every AI deployment in production.

After honing your skills in AI and ML, you can use the tools and compilers on Great Learning to practice cloud and AI tooling hands-on, and explore the careers and roadmap resources to plan your learning path.

Will AI Replace DevOps Jobs?

AI automates tasks, not roles. DevOps engineers who understand the “why” behind automation (system reliability, developer velocity, and cost efficiency) will remain indispensable. The profession is evolving, not evaporating.

According to the World Economic Forum's Future of Jobs Report 2025, approximately 170 million new jobs are expected to be created globally by 2030, even as 92 million are displaced, a net gain of about 78 million. The net outcome favors adaptability.

1. Entry-Level Repetitive Roles May Decline

Scripting-heavy, ticket-driven, and manual configuration roles are most exposed. Many of the largest IT companies have already scaled back hiring at entry and mid-levels due to increased AI deployment. The Great Learning report reflects this trend: B.Tech/B.E graduates are among the least optimistic about AI's impact on their careers (64% vs. the 78% average).

2. Demand for Skilled, Adaptive Professionals Will Rise

Roles in platform engineering, cloud architecture, SRE leadership, and AI-augmented DevOps are growing. The 91% of IT/ITES professionals who consider upskilling important are responding rationally to this market signal.

Refusing to adapt carries its own risks. As explored in Is Refusing to Adopt AI Tools at Work Damaging Your Career Growth?, inaction in the face of automation is not neutrality; it is a choice with real career consequences.

3. AI = Productivity Multiplier, Not a Replacement

AI removes toil, amplifies throughput, and accelerates feedback loops. Engineers who embrace it will do more with less. Those who resist it will increasingly find themselves outpaced not by AI, but by peers who use it effectively. The imperative to upskill with generative AI as an IT professional has never been more direct.

Want to map your path forward?

Watch DevOps Career Roadmap | DevOps Tutorial for Beginners for a structured view of how the role is evolving and where to focus your growth.

Moreover, explore Great Learning's careers and roadmap resources and take a quiz to assess where you currently stand. To accelerate practical learning, use the tools and compilers available on the Great Learning platform.

Conclusion

AI-powered automation is not coming for DevOps engineers; it is coming for the parts of their jobs that should have been automated years ago. What it cannot replace is the judgment, creativity, and contextual intelligence that experienced engineers bring to complex, ambiguous, high-stakes environments.

The professionals who will thrive are those who treat AI as a force multiplier: using LLMs to generate IaC faster, leveraging AIOps platforms to detect incidents earlier, and applying ML-driven observability to prevent failures before they occur.

Those who build skills in cloud-native engineering, platform architecture, and AI fundamentals will find themselves more valuable in an automated world.

The role of a DevOps engineer is not ending. It is being upgraded.